- OpenAI Frontier is a significant architectural shift with stateful memory and enhanced multi-modal capabilities, accessed via a new `v2` API endpoint.

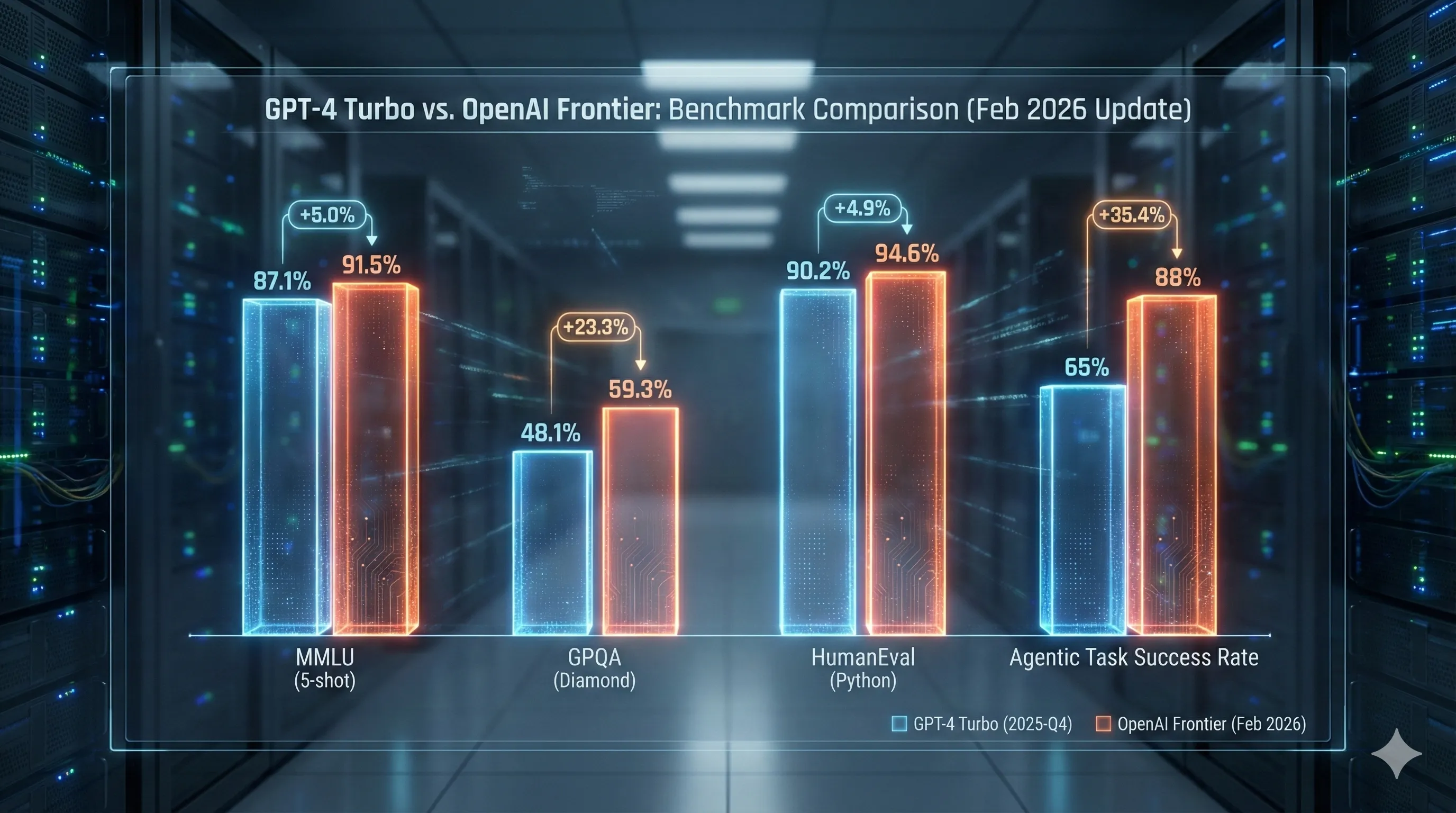

- It delivers marked improvements in complex reasoning and agentic tasks (+23-35% on specific benchmarks), though with slightly slower initial response times for simple requests.

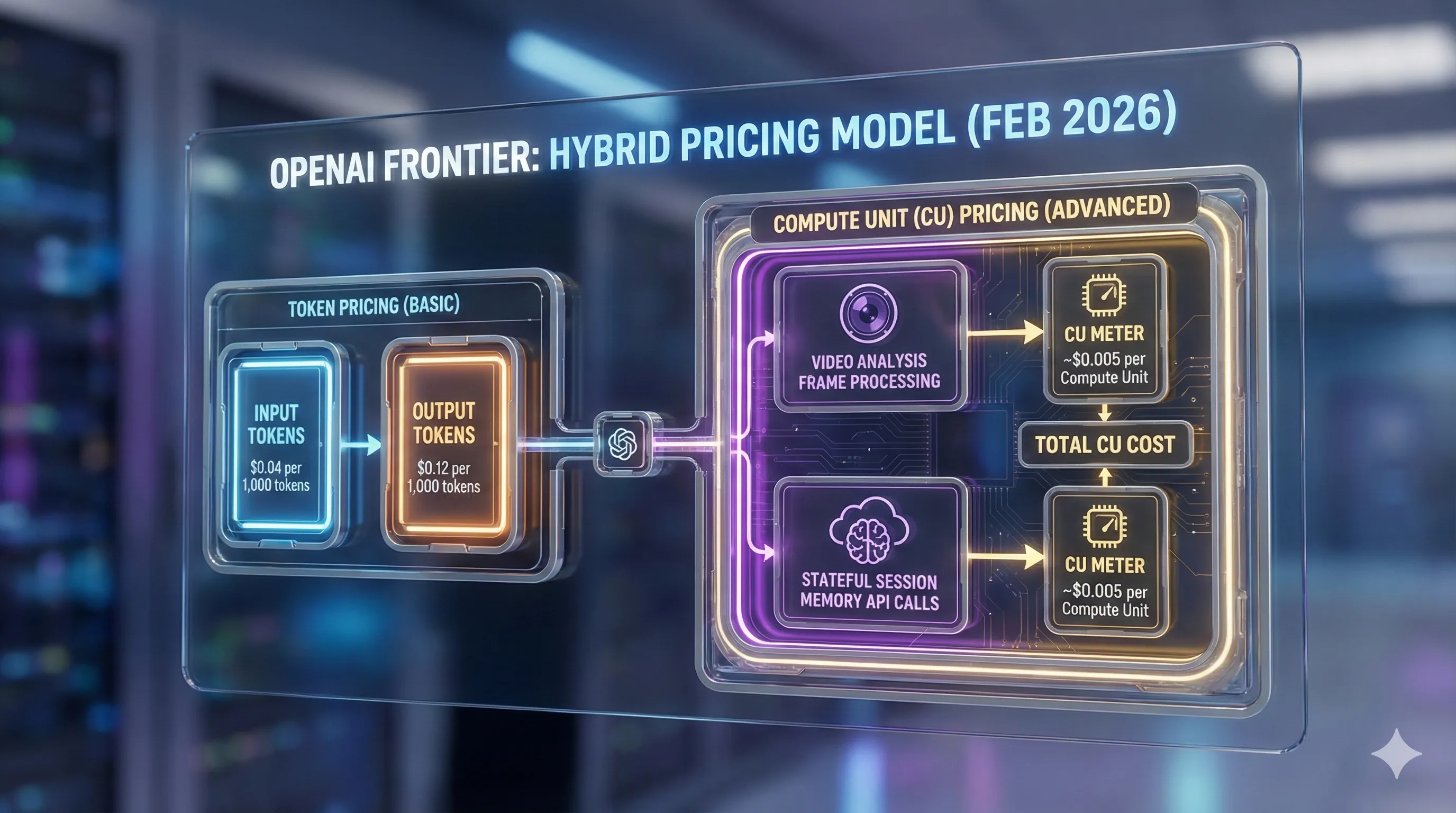

- Frontier introduces a hybrid pricing model (tokens + Compute Units), leading to a 3-4x cost increase for basic tasks, making its value proposition clearest for complex, multi-modal, or agentic systems.

- Migration is non-trivial, requiring developers to adapt to a new `v2` API, manage `session_id` for stateful interactions, and rethink workflows around 'session engineering'.

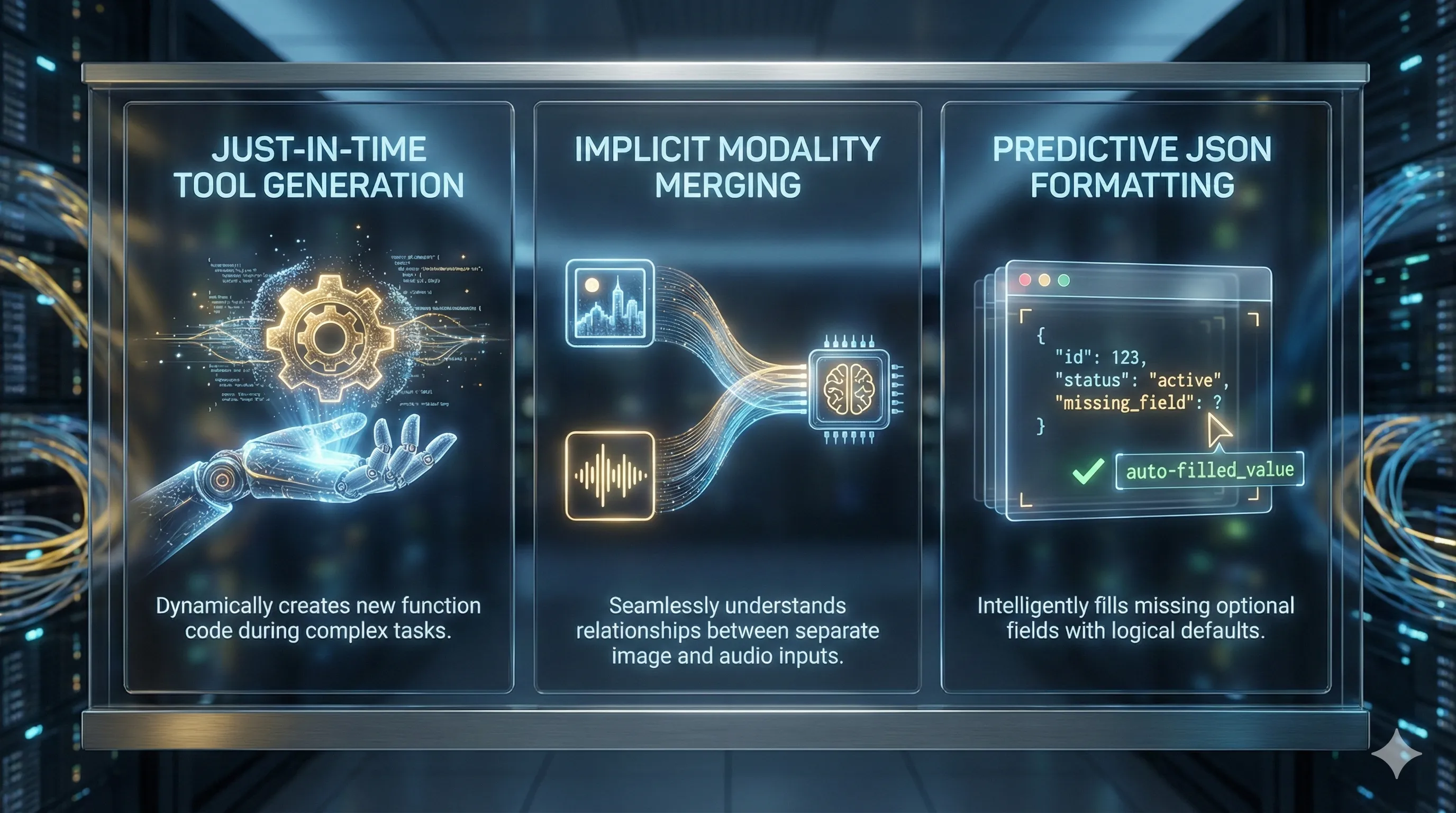

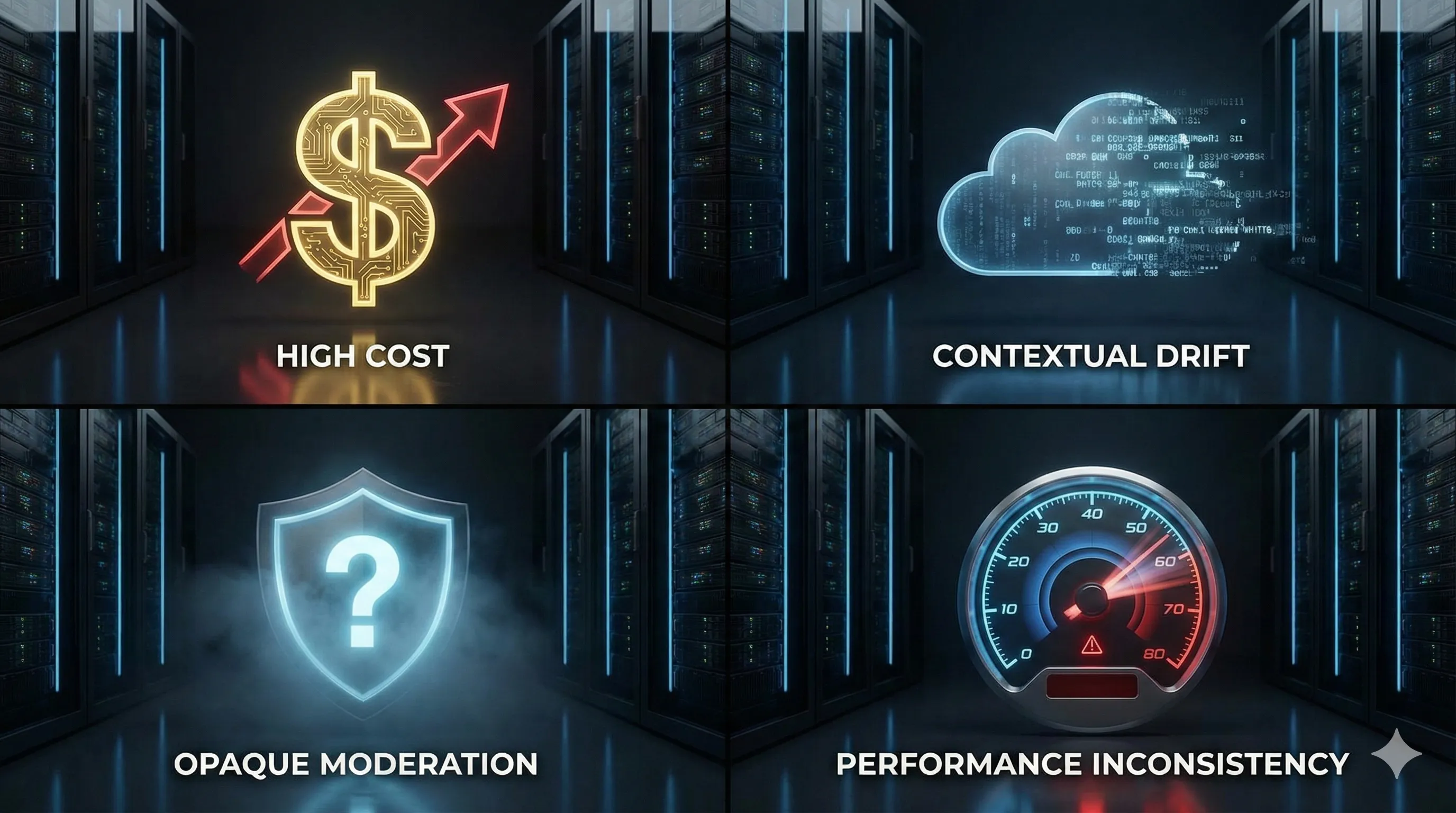

- While powerful, early adopters cite high cost, potential contextual drift in long sessions, and opaque safety explanations as primary criticisms, alongside community reports of undocumented capabilities like 'Just-in-Time' tool generation.

OpenAI Frontier: A Critical Look at the Latest Version Update (Feb 2026)

OpenAI released Frontier in early 2026, a major version update that marks a substantial architectural leap for its models.

This guide dives into the new capabilities, API endpoint changes, and developer workflow adjustments required to effectively integrate Frontier into your projects.

As experienced users, we'll cut through the marketing to give you an honest appraisal of whether this update justifies its cost and complexity for your specific use cases.

Real-World Performance Benchmarks: Is Frontier Worth the Hype?

OpenAI positions Frontier not as a simple iteration, but as a new foundational series, bringing stateful memory and advanced multi-modal capabilities to the forefront.

Independent benchmarks and developer feedback confirm significant performance gains over GPT-4 Turbo, though not uniformly across all metrics.

For tasks requiring complex, multi-step reasoning, Frontier truly shines, demonstrating higher accuracy and fewer logical contradictions in extended generations.

GPQA (Diamond) rises from 48.1% to 59.3% and MMLU (5-shot) from 87.1% to 91.5%, which translates into more reliable outputs for intricate problems.

However, speed presents a mixed picture.

For simple text-in, text-out requests, Frontier's time-to-first-token latency is about 15% higher than GPT-4 Turbo's.

Yet, for agentic tasks involving tool use and internal planning, Frontier's overall execution time is considerably faster due to its more efficient internal architecture.

This makes it a strong contender for automating complex workflows where total task completion time is critical.

Resource consumption is also a factor, particularly for enterprise partners considering self-hosted deployments.

Frontier models require nearly double the VRAM compared to GPT-4 class models for similar throughput, indicating a substantially larger model under the hood.

| Benchmark | GPT-4 Turbo (2025-Q4) | OpenAI Frontier (Feb 2026) | Relative Improvement |
|---|---|---|---|
| MMLU (5-shot) | 87.1% | 91.5% | +5.1% |
| GPQA (Diamond) | 48.1% | 59.3% | +23.3% |
| HumanEval (Python) | 90.2% | 94.6% | +4.9% |
| Agentic Task Success Rate* | 65% | 88% | +35.4% |

Note: 'Agentic Task Success Rate' is a new composite benchmark measuring the model's ability to complete a multi-tool, multi-step goal without human intervention. Data is aggregated from early community tests.
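The last column of the table is a relative gain, not a percentage-point difference, and the two are easy to confuse. A minimal sketch of the arithmetic, using two rows from the table (the function name `relative_improvement` is ours, chosen for illustration):

```python
def relative_improvement(baseline: float, new: float) -> float:
    """Return the relative change from baseline to new, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (new - baseline) / baseline * 100.0


# Scores taken from the table above: (GPT-4 Turbo, Frontier).
scores = {
    "GPQA (Diamond)": (48.1, 59.3),
    "Agentic Task Success Rate": (65.0, 88.0),
}

for name, (old, new) in scores.items():
    points = new - old
    relative = relative_improvement(old, new)
    print(f"{name}: +{points:.1f} points, +{relative:.1f}% relative")
```

An 11.2-point jump on GPQA reads as +23.3% only because the baseline is low. The same absolute gain on a benchmark that already sits near 90% would look far smaller in relative terms.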

The New Price Tag: A Critical Look at Frontier's Cost-Benefit for Developers

Frontier's launch introduced a significantly higher price point and a revamped pricing structure, moving beyond simple token counts to incorporate 'Compute Units' (CUs) for advanced features.

This shift has sparked considerable debate within the developer community.

Standard API pricing for input tokens is $0.04 per 1,000 tokens, up from roughly $0.01 for GPT-4 Turbo.

Output tokens rise by the same 4x factor, to $0.12 per 1,000 tokens from GPT-4 Turbo's ~$0.03.

Beyond tokens, specific tasks like video frame analysis or using the stateful memory API are billed per Compute Unit, currently priced at around $0.005 per unit.

| Pricing Component | OpenAI Frontier (Standard API) | GPT-4 Turbo (Approx. Comparison) |
|---|---|---|
| Input Tokens | $0.04 per 1,000 tokens | ~$0.01 per 1,000 tokens |
| Output Tokens | $0.12 per 1,000 tokens | ~$0.03 per 1,000 tokens |
| Compute Units (CU) | Billed for specific tasks (e.g., Video Frame Analysis, Stateful Memory API) at ~$0.005 per unit. | Not applicable (different model architecture). |

For straightforward chatbot applications, Frontier represents a 3-4x cost increase for what might be only a marginal improvement in text quality, making it less appealing.
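To see how the hybrid model adds up for a single request, here is a rough cost estimator built on the rates quoted above. The `Pricing` class and `estimate_cost` function are illustrative names, not part of any SDK, and the number of Compute Units a given task consumes is not specified in this article, so that figure is left as an input.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_1k: float            # USD per 1,000 input tokens
    output_per_1k: float           # USD per 1,000 output tokens
    per_compute_unit: float = 0.0  # USD per Compute Unit (0 if not billed)


# Rates quoted in this article; the GPT-4 Turbo figures are approximate.
FRONTIER = Pricing(input_per_1k=0.04, output_per_1k=0.12, per_compute_unit=0.005)
GPT4_TURBO = Pricing(input_per_1k=0.01, output_per_1k=0.03)


def estimate_cost(pricing: Pricing, input_tokens: int, output_tokens: int,
                  compute_units: int = 0) -> float:
    """Estimate the USD cost of one request under the given pricing."""
    return (
        input_tokens / 1000 * pricing.input_per_1k
        + output_tokens / 1000 * pricing.output_per_1k
        + compute_units * pricing.per_compute_unit
    )


# A plain chat turn: 1,500 input tokens, 500 output tokens, no Compute Units.
frontier = estimate_cost(FRONTIER, 1500, 500)
legacy = estimate_cost(GPT4_TURBO, 1500, 500)
print(f"Frontier: ${frontier:.4f}  GPT-4 Turbo: ${legacy:.4f}  "
      f"ratio: {frontier / legacy:.1f}x")
```

On token rates alone the ratio comes out at the top of the 3-4x range. Any Compute Units consumed by stateful or multi-modal features are added on top of that.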

The clear value proposition emerges for businesses building complex, multi-modal, or agentic systems.

Workflows that previously required orchestrating multiple GPT-4 calls, external vector databases, and custom state management can now be consolidated into a single, albeit more expensive, stateful Frontier call.

In these advanced scenarios, the Total Cost of Ownership (TCO) could potentially decrease due to simplified architecture and development.

API Migration Headaches: What Developers Need to Know for Frontier Adoption

Migrating to OpenAI Frontier is not a simple model name change; it's a non-trivial process involving breaking API changes and a shift in architectural thinking.

Frontier operates exclusively through a new `v2` API endpoint, demanding significant updates to existing codebases.

The most critical change is the new endpoint: all calls must now target `/v2/chat/completions`.

The legacy `/v1` endpoint simply does not support Frontier.

A key new feature is the optional `session_id` parameter, which lets OpenAI manage conversational memory on its side, freeing developers from passing the entire chat history with every request.

This requires re-architecting applications from stateless request-response cycles to a session-based interaction model, which early adopters identify as the largest migration challenge.
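The appeal of server-side memory is easiest to see in token arithmetic. The sketch below compares total input tokens when every request resends the full history against a session where each request carries only the new message. It assumes every message has the same length and that a session bills no stored context as input tokens. It also ignores the Compute Unit charges for the stateful memory API, so treat it as an upper bound on the savings rather than a forecast.

```python
def stateless_input_tokens(turns: int, tokens_per_message: int) -> int:
    """Total input tokens when every request resends the full history.

    Turn k sends the new user message plus the (k - 1) earlier
    user/assistant exchanges, so it carries (2k - 1) messages.
    """
    return sum((2 * k - 1) * tokens_per_message for k in range(1, turns + 1))


def session_input_tokens(turns: int, tokens_per_message: int) -> int:
    """Total input tokens when each request sends only the new message."""
    return turns * tokens_per_message


turns, size = 20, 100
print("stateless:", stateless_input_tokens(turns, size))
print("session:  ", session_input_tokens(turns, size))
```

Resending history grows quadratically with the number of turns, while the session-based total grows linearly. The gap widens quickly for long conversations.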

Furthermore, the `tools` parameter now demands a more rigid JSON schema and introduces a `tool_choice` object, offering granular control over which tools the model can utilize.

Legacy parameters, such as `logit_bias`, have been refined and nested under a new `generation_params` object.
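One way to contain this change is a small adapter that rewrites existing request arguments into the new shape before they reach the SDK. The sketch below is hypothetical: `migrate_request` is our own helper, and `logit_bias` is the only parameter this article names as moving under `generation_params`, so check the official reference before adding others to the set.

```python
from copy import deepcopy
from typing import Optional

# Assumption: only `logit_bias` is named in this article as moving under
# `generation_params`; extend this set only after checking the real docs.
NESTED_PARAMS = {"logit_bias"}


def migrate_request(v1_kwargs: dict, session_id: Optional[str] = None) -> dict:
    """Return a v2-style copy of v1 request kwargs (hypothetical shape)."""
    v2_kwargs = deepcopy(v1_kwargs)  # never mutate the caller's dict
    generation_params = v2_kwargs.pop("generation_params", {})
    for name in NESTED_PARAMS:
        if name in v2_kwargs:
            generation_params[name] = v2_kwargs.pop(name)
    if generation_params:
        v2_kwargs["generation_params"] = generation_params
    if session_id is not None:
        v2_kwargs["session_id"] = session_id
    return v2_kwargs


old_request = {
    "model": "gpt-4-turbo-2025",
    "messages": [{"role": "user", "content": "Hello"}],
    "logit_bias": {"50256": -100},
}
print(migrate_request(old_request, session_id="user_session_12345"))
```

The helper leaves `model` untouched on purpose, so switching the model name stays an explicit decision at the call site.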

Here’s a look at the migration for the `openai` Python SDK v2.0:

```python
# Old way (GPT-4)
# response = client.chat.completions.create(
#     model="gpt-4-turbo-2025",
#     messages=[...]
# )

# New way (Frontier)
# Note the `v2` namespace and the session_id parameter.
# `client` is assumed to be an already-initialized SDK client (setup not shown).
response = client.v2.chat.completions.create(
    model="frontier-2026-02-base",
    session_id="user_session_12345",
    messages=[
        {"role": "user", "content": "What was the last thing I asked you about?"}
    ],
)
```

This shift to 'session engineering' means developers must strategize optimal session lengths, when to inject summaries into memory, and when to terminate sessions to manage costs and prevent 'contextual drift'.
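A minimal sketch of what session engineering can look like in application code: a tracker that counts turns, signals when a session should be retired, and seeds the next session with a summary. The `SessionTracker` class is our own illustration and makes no API calls. The default of 100 turns mirrors the point at which some users report drift (covered later in this article) and should be tuned per workload.

```python
import uuid
from dataclasses import dataclass, field


def _new_session_id() -> str:
    return f"session_{uuid.uuid4().hex}"


@dataclass
class SessionTracker:
    """Track turns per session and rotate before drift becomes likely."""
    max_turns: int = 100  # assumed threshold, not an official limit
    session_id: str = field(default_factory=_new_session_id)
    turns: int = 0

    def record_turn(self) -> None:
        self.turns += 1

    def should_rotate(self) -> bool:
        return self.turns >= self.max_turns

    def rotate(self, summary: str) -> list:
        """Start a fresh session and return seed messages carrying the summary."""
        self.session_id = _new_session_id()
        self.turns = 0
        return [{
            "role": "system",
            "content": f"Summary of the previous session: {summary}",
        }]


tracker = SessionTracker(max_turns=3)
for _ in range(3):
    tracker.record_turn()
if tracker.should_rotate():
    seed_messages = tracker.rotate("User is comparing API pricing tiers.")
    print(tracker.session_id, seed_messages)
```

How the summary is produced (by the model itself, or by application logic) is a separate design choice that this sketch leaves open.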

Beyond the Docs: Uncovering Frontier's Hidden Capabilities

Community exploration on platforms like the OpenAI Dev Forum has quickly unearthed capabilities in Frontier that weren't prominently featured in the initial release notes.

These reports are anecdotal, but they hint at behavior that goes beyond what the documentation describes.

One intriguing, though still 'rumored,' capability is 'Just-in-Time' Tool Generation.

Several users have reported instances where, during complex agentic tasks, Frontier seems to generate and execute simple, single-purpose Python functions within its sandboxed environment, even without these tools being explicitly provided in the prompt.

This suggests a sophisticated internal reasoning and code generation capability that adapts on the fly.

Another observed trait is Implicit Modality Merging.

While the documentation outlines how to feed video or audio data, experimenters have found that Frontier can infer relationships between separately provided inputs, such as an image and an audio file.

For example, it might reference a 'sound in the image' without explicit instructions to connect the two modalities, treating them as a more cohesive input than merely separate data streams.

Finally, Predictive JSON Formatting stands out.

The model demonstrates a near-perfect success rate in generating valid JSON adhering to a provided schema.

More remarkably, it appears to predict and fill in missing optional fields within a schema with logical defaults, a behavior not officially documented but incredibly useful for robust API integrations.
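Because this default-filling behavior is undocumented, it seems safer to treat it as a convenience rather than a guarantee. The sketch below parses a model response, enforces required fields, and applies defaults that the application controls. The function and field names are hypothetical, and the check is deliberately simpler than full JSON Schema validation.

```python
import json


def parse_with_defaults(raw: str, required: set, optional_defaults: dict) -> dict:
    """Parse a JSON object, enforce required keys, and apply our own defaults."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = required - set(data)
    if missing:
        raise ValueError(f"missing required fields: {sorted(missing)}")
    # Our defaults apply only where the response left a field out.
    return {**optional_defaults, **data}


raw_response = '{"name": "Ada", "plan": "pro"}'
record = parse_with_defaults(
    raw_response,
    required={"name"},
    optional_defaults={"plan": "free", "locale": "en"},
)
print(record)
```

With this in place, a change in the model's implicit defaults cannot silently alter downstream behavior, since any field the application depends on is either required or explicitly defaulted.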

Early Feedback: Bugs, Criticisms, and Unexpected Behavior in Frontier

Initial reception for OpenAI Frontier is a blend of excitement over new potential and significant frustration over practical challenges.

Users are actively reporting a spectrum of issues.

The primary criticism revolves around cost.

The substantial price increase is a major barrier for many independent developers and startups, who feel priced out of using the flagship model for anything beyond their most critical, high-value tasks.

This limits broader experimentation and adoption.

A common bug reported is 'contextual drift' in very long-running stateful sessions.

After more than 100 turns, some users observe the model subtly ignoring or forgetting earlier instructions and context, undermining the core benefit of persistent memory.

This necessitates careful session management and potentially manual re-injection of crucial context.
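Manual re-injection can be as simple as resending a pinned block of instructions at a fixed interval. The helper below is a sketch under two assumptions: that the session accepts a `system` message mid-conversation, and that an interval of 25 turns is frequent enough to counter drift. Neither is confirmed by the documentation, and `build_messages` is our own name.

```python
def build_messages(user_text: str, turn_number: int, pinned_context: str,
                   reinject_every: int = 25) -> list:
    """Build the messages for one turn, resending pinned context periodically.

    turn_number is 1-based. The pinned context goes out on the first turn
    and then again every `reinject_every` turns.
    """
    if reinject_every < 1:
        raise ValueError("reinject_every must be at least 1")
    messages = []
    if (turn_number - 1) % reinject_every == 0:
        messages.append({"role": "system", "content": pinned_context})
    messages.append({"role": "user", "content": user_text})
    return messages


pinned = "Always answer in formal English and cite the ticket ID."
print(build_messages("Status update?", turn_number=26, pinned_context=pinned))
```

Keeping the pinned block short matters here, because every re-injection is billed as input tokens.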

The new safety system, termed 'Reflexive Moderation,' is a source of controversy.

While more robust, it's also more opaque.

The model sometimes refuses seemingly harmless prompts with generic explanations, making it difficult for developers to debug and refine their inputs for creative or nuanced applications.

This lack of actionable feedback slows down development cycles significantly.

Lastly, performance inconsistency plagues some users.

While average latency is acceptable, specific queries, especially those involving complex tool use, can spike unexpectedly, sometimes to as much as 30 seconds.

This variability is problematic for real-time applications and user experience.
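For real-time applications, one common mitigation is a client-side deadline with a bounded retry. The sketch below wraps any zero-argument callable using only the standard library. `call_with_deadline` is our own helper, not an SDK feature, and a retried request may be billed twice if the first attempt eventually completes on the server.

```python
import concurrent.futures


def call_with_deadline(fn, timeout_s: float, max_attempts: int = 2):
    """Run fn() with a per-attempt deadline, retrying if it times out.

    A timed-out attempt is abandoned, not cancelled: its worker thread
    keeps running in the background until fn() returns.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        executor = concurrent.futures.ThreadPoolExecutor(max_workers=1)
        future = executor.submit(fn)
        try:
            return future.result(timeout=timeout_s)
        except concurrent.futures.TimeoutError:
            if attempt == max_attempts:
                raise TimeoutError(
                    f"no response within {timeout_s}s after {max_attempts} attempts"
                )
        finally:
            # Do not block on an abandoned worker thread.
            executor.shutdown(wait=False)
```

In practice `fn` would be a closure around the actual API call, for example `lambda: client.v2.chat.completions.create(...)`. If the SDK offers its own timeout setting, that is usually the better choice, since it can cancel the underlying request.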

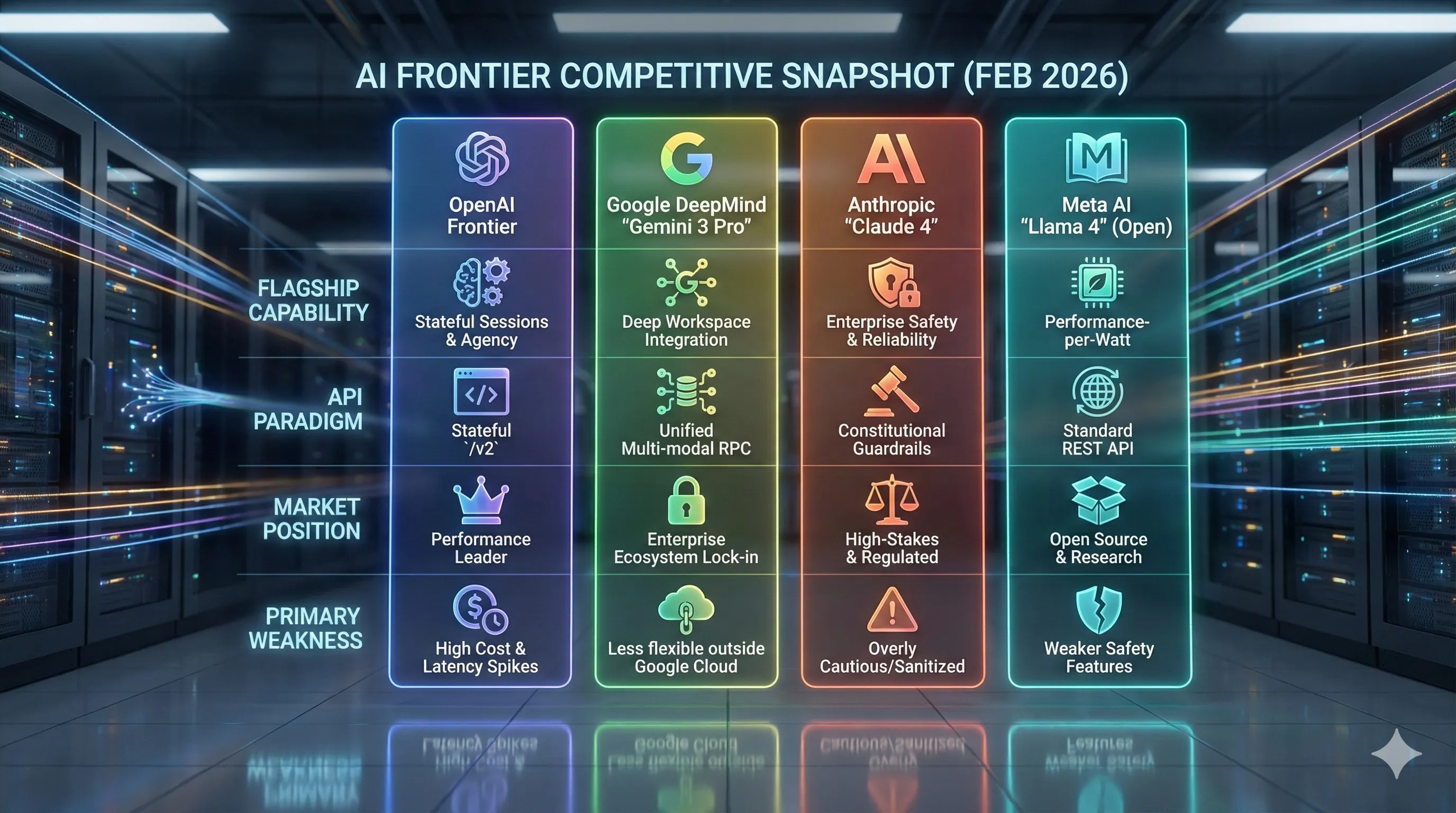

Frontier in the AI Race: A Competitive Snapshot (Early 2026)

OpenAI Frontier launches into a crowded field in early 2026. It solidifies OpenAI's position at the cutting edge, but it faces fierce competition.

The market features strong offerings from Google DeepMind, Anthropic, and Meta AI, each with distinct strengths and target markets.

Frontier's flagship capabilities, stateful sessions and agency, differentiate it, allowing for sophisticated, multi-step interactions without constant context re-feeding.

However, its primary weakness lies in its high cost and occasional latency spikes, which limit its broad appeal.

Google DeepMind's 'Gemini 3 Pro' focuses on deep workspace integration, aiming to embed AI more seamlessly into enterprise ecosystems.

While powerful, it can be less flexible outside the Google Cloud environment.

Anthropic's 'Claude 4' continues to lead in enterprise safety and reliability, leveraging its constitutional guardrails for high-stakes and regulated industries, though sometimes criticized for being overly cautious.

Meta AI's 'Llama 4' targets the open-source and research communities, prioritizing performance-per-watt and offering greater transparency, albeit with weaker default safety features.

The competitive landscape highlights a segmentation of the AI market, where no single model dominates every aspect.

| Feature / Model | OpenAI Frontier | Google DeepMind 'Gemini 3 Pro' | Anthropic 'Claude 4' | Meta AI 'Llama 4' (Open) |
|---|---|---|---|---|
| Flagship Capability | Stateful Sessions & Agency | Deep Workspace Integration | Enterprise Safety & Reliability | Performance-per-Watt |
| API Paradigm | Stateful `/v2` | Unified Multi-modal RPC | Constitutional Guardrails | Standard REST API |
| Market Position | Performance Leader | Enterprise Ecosystem Lock-in | High-Stakes & Regulated | Open Source & Research |
| Primary Weakness | High Cost & Latency Spikes | Less flexible outside Google Cloud | Overly Cautious/Sanitized | Weaker Safety Features |

Note: Competitor model names and features are based on their latest announced versions and industry analysis as of Feb 2026.

Final Thoughts: Is Frontier Right for Your Project?

OpenAI Frontier represents a genuine next-generation model, trading simplicity and lower costs for unprecedented power in agentic and stateful applications.

While its performance gains in complex reasoning and multi-step tasks are undeniable, the significant price increase and migration effort are critical factors for any developer or business.

For those building intricate systems like advanced patient triage in healthcare, autonomous financial agents, or highly personalized educational tutors, Frontier's ability to maintain context over long sessions and execute complex agentic goals can drastically reduce development complexity and enhance capabilities.

However, for simpler applications or budget-constrained projects, the cost may outweigh the benefits, making older, more affordable models a more practical choice.

The success of Frontier will ultimately depend on whether the substantial value unlocked by its new features is enough for businesses to justify the steep investment in both price and engineering effort.