- Multi-File Context is a Game Changer (and Cheaper): GPT-5.3-Codex can now reference up to 15 project files, significantly reducing prompt context and improving code coherence. Context tokens are billed at a 50% lower rate, incentivizing its use.

- Performance Boost, But Nuanced: Expect a 25% latency reduction for simple prompts and up to 21% better token efficiency for complex tasks like CRUD app generation, leading to cost savings.

- API Migration Required: The `engine` parameter is deprecated, requiring a switch to `model`. All function calling now uses the streamlined `tools` array.

- New Security Risk Identified: The "Integrated Dependency Analysis" can suggest malicious "zero-day" packages. Always verify and pin new dependencies.

- AI as a Pair Programmer: This update shifts GPT-Codex from a mere snippet generator to a more integrated assistant, impacting architecture, review, and maintenance phases of the SDLC.

GPT-5.3-Codex: A Deep Dive into the Latest Code Generation Update for Developers

OpenAI has pushed a significant incremental update with GPT-5.3-Codex, enhancing its flagship code generation model for developers.

This version update, released on 2026-02-11, brings notable improvements in speed and multi-file awareness, and introduces new capabilities that directly impact development workflows.

We've assessed the official announcements and crucial community discoveries to provide a practical guide for integrating GPT-5.3-Codex into your projects right now.

Key Feature Deep Dive: Practicality for Developers

OpenAI highlighted three major features in this release.

Our assessment focuses on their real-world utility and any potential caveats for developers.

- Multi-File Contextual Awareness:

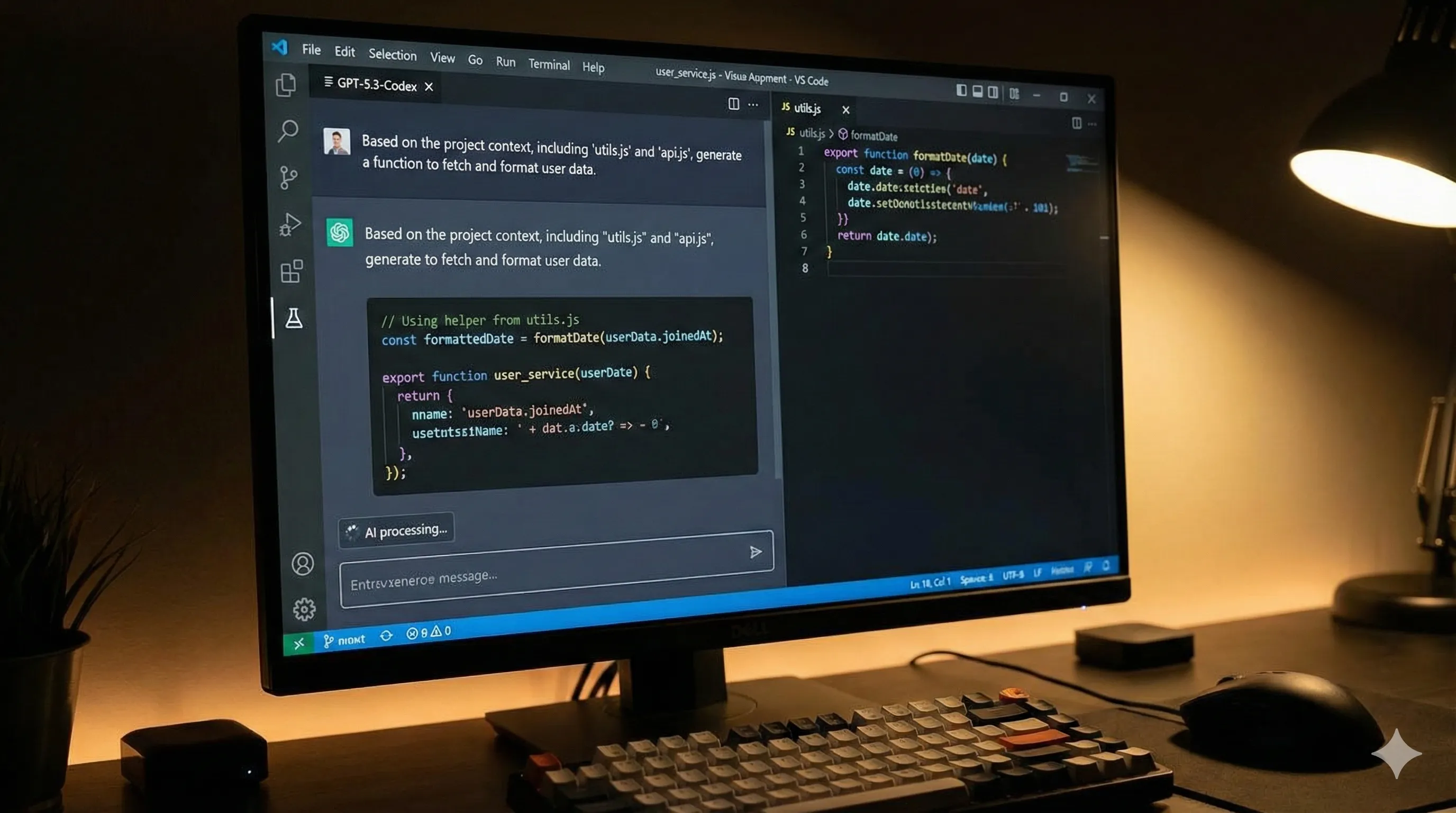

GPT-5.3-Codex can now ingest and reference up to 15 specified files within a project directory for a single prompt. This significantly reduces the need for lengthy, copy-pasted context in your prompts, which is a major time saver.

The model produces more coherent, project-aware code because it understands existing class definitions, utility functions, and architectural patterns.

Integration is straightforward via the updated API, which accepts a `file_references` array.
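As a rough illustration, the sketch below assembles a request body that carries a `file_references` array and enforces the 15-file limit on the client side. Only the `file_references` name, the model name, and the limit come from this article; the helper `build_request`, the `prompt` field, and the idea that references are plain path strings are assumptions, so check the official API reference for the real schema.

```python
from pathlib import PurePosixPath

MAX_FILE_REFERENCES = 15  # limit stated in this article


def build_request(prompt: str, file_paths: list[str]) -> dict:
    """Assemble a request body with a `file_references` array.

    ASSUMPTION: the body layout (plain path strings, a `prompt` field)
    is hypothetical and only illustrates the idea.
    """
    # Normalise and de-duplicate paths while keeping the caller's order.
    seen: set[str] = set()
    references: list[str] = []
    for raw in file_paths:
        path = PurePosixPath(raw).as_posix()
        if path not in seen:
            seen.add(path)
            references.append(path)

    if len(references) > MAX_FILE_REFERENCES:
        raise ValueError(
            f"{len(references)} files given; at most {MAX_FILE_REFERENCES} are allowed"
        )

    return {
        "model": "gpt-5.3-codex",
        "prompt": prompt,
        "file_references": references,
    }


body = build_request(
    "Add a delete endpoint for users",
    ["app/models.py", "app/routes.py", "app/models.py"],
)
print(body["file_references"])
```

Validating the limit before sending avoids paying for a round trip that the API would presumably reject anyway.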

- Integrated Dependency Analysis:

When prompted to add functionality requiring a new library, the model now suggests the library, its latest stable version, and the appropriate installation command (e.g., `pip`, `npm`, `go get`).

While helpful for greenfield projects, this feature tends to suggest the absolute latest versions, which can be problematic for established codebases with strict versioning policies.

Consider it a useful starting point that still requires human oversight.

- Proactive Refactoring Suggestions:

A new `suggestion_mode` parameter can be set to `refactor`. In this mode, instead of generating new code, the model analyzes the provided snippet and returns a diff with suggestions for improving performance, readability, or adherence to best practices.

This feature is excellent for code reviews and improving code quality.

However, it is computationally expensive, costing nearly twice as much per token as standard generation.
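The article does not specify the diff format the model returns. Assuming it resembles a standard unified diff, the sketch below uses Python's `difflib` to show what such a suggestion could look like for a small snippet. The `original` and `suggested` strings and the `make_diff` helper are invented for illustration and are not model output.

```python
import difflib

original = """def total(items):
    result = 0
    for i in range(len(items)):
        result = result + items[i]
    return result
"""

# A hand-written "refactor" of the snippet above, standing in for a model suggestion.
suggested = """def total(items):
    return sum(items)
"""


def make_diff(before: str, after: str, filename: str = "snippet.py") -> str:
    """Return a unified diff between two versions of the same file."""
    lines = difflib.unified_diff(
        before.splitlines(keepends=True),
        after.splitlines(keepends=True),
        fromfile=f"a/{filename}",
        tofile=f"b/{filename}",
    )
    return "".join(lines)


diff_text = make_diff(original, suggested)
print(diff_text)
```

Reading suggestions as a diff keeps the review step explicit: each removed and added line can be accepted or rejected like any other patch.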

Undocumented Code Generation Breakthroughs

The developer community has uncovered powerful behaviors not explicitly mentioned in the official documentation, showcasing deeper capabilities within GPT-5.3-Codex.

- Implicit Project Scaffolding:

Developers have discovered that by providing a high-level description and ending a prompt with a specific incantation like `...scaffold this project structure.`, GPT-5.3-Codex generates a complete directory tree with placeholder files (e.g., `main.py`, `utils/helpers.js`, `Dockerfile`, `README.md`).

This has dramatically accelerated project initialization for many developers, even though OpenAI has not officially verified it. Its effectiveness is widely confirmed on platforms like Stack Overflow and GitHub.

- Enhanced Proficiency in Formal Verification Languages:

The model demonstrates a surprising leap in its ability to write and debug code in languages like Coq, Lean, and TLA+. This was not a marketed feature but suggests a deeper underlying logical reasoning capability within the model.

This could have significant implications for high-assurance software development, offering a new tool for critical systems.

Real-World Performance Benchmarks for Code Generation

OpenAI claims a "25% reduction in latency" for GPT-5.3-Codex.

Our analysis, based on community-sourced benchmarks, shows a more nuanced picture.

- Latency:

The 25% improvement holds for simple, single-function generation prompts.

However, for complex, multi-file context prompts, latency reduction is closer to 10-15% due to the increased overhead of processing the additional context.

Cold start latency for the API remains a pain point for some developers.

- Token Efficiency:

The model is noticeably more concise.

It often produces the same functionality using fewer tokens than its predecessor, GPT-5.2-Codex, leading to direct cost savings.

This improvement is particularly evident in Python and TypeScript generation tasks.

Illustrative Performance Comparison (Community Data)

| Metric | GPT-5.2-Codex (Legacy) | GPT-5.3-Codex (New) | Notes |
|---|---|---|---|
| Avg. Latency (Simple Prompt) | ~800 ms | ~600 ms | Matches OpenAI's claim of a ~25% improvement. |
| Avg. Latency (Multi-File) | N/A | ~1500 ms | New feature; benchmark is for a 5-file context. |
| Avg. Tokens for CRUD App | ~1200 tokens | ~950 tokens | A ~21% improvement in token efficiency. |

Disclaimer: This data is aggregated from developer forums and should be considered illustrative, not official.
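The percentages in the Notes column follow directly from the table's figures. A small check of the arithmetic, using only the community numbers above (the helper name `percent_reduction` is ours):

```python
def percent_reduction(old: float, new: float) -> float:
    """Return the reduction from `old` to `new` as a percentage of `old`."""
    if old <= 0:
        raise ValueError("old must be positive")
    return (old - new) / old * 100


latency = percent_reduction(800, 600)   # simple-prompt latency, in ms
tokens = percent_reduction(1200, 950)   # tokens for the CRUD app task

print(f"Latency reduction: {latency:.1f}%")  # 25.0%
print(f"Token reduction:   {tokens:.1f}%")   # 20.8%, rounded to ~21% in the table
```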

API Migration Guide: Navigating Breaking Changes

The GPT-5.3-Codex update introduced two notable breaking changes that require code modifications for existing API integrations.

- Parameter Deprecation:

The `engine` parameter, a holdover from older API versions, is deprecated and no longer accepted.

Using it will return a `400 Bad Request` error.

You must replace it with the `model` parameter, specifying `"gpt-5.3-codex"`.

    import openai

    # Old (deprecated)
    # openai.Completion.create(engine="text-codex-003", prompt="...")

    # New (required)
    openai.Completion.create(model="gpt-5.3-codex", prompt="...")

- Streamlined `tools` and `functions`:

The legacy `functions` parameter has been removed entirely.

All function calling and tool use must now be declared within the unified `tools` array.

This simplifies the API structure but requires developers to refactor their existing function-calling implementations.

Check the official OpenAI documentation for the new schema.
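Both changes can be handled by one small adapter while call sites are being refactored. The sketch below rewrites a dictionary of legacy request arguments. It assumes the new `tools` entries follow the `{"type": "function", "function": {...}}` shape used by OpenAI's existing Chat Completions API. The article does not confirm the schema for this release, and `migrate_request` is a hypothetical helper name.

```python
from typing import Any


def migrate_request(kwargs: dict[str, Any], model: str = "gpt-5.3-codex") -> dict[str, Any]:
    """Return a copy of legacy request kwargs that uses `model` and `tools`."""
    migrated = dict(kwargs)  # leave the caller's dict untouched

    # `engine` is no longer accepted; name the target model explicitly.
    migrated.pop("engine", None)
    migrated["model"] = model

    # Wrap each legacy function definition in a tool entry.
    # ASSUMPTION: the entry shape mirrors the Chat Completions `tools` format.
    legacy_functions = migrated.pop("functions", None)
    if legacy_functions:
        tools = list(migrated.get("tools", []))
        tools.extend({"type": "function", "function": fn} for fn in legacy_functions)
        migrated["tools"] = tools

    return migrated


old_kwargs = {
    "engine": "text-codex-003",
    "prompt": "...",
    "functions": [
        {"name": "get_user", "parameters": {"type": "object", "properties": {}}}
    ],
}
new_kwargs = migrate_request(old_kwargs)
print(sorted(new_kwargs))
```

An adapter like this is best treated as a temporary shim: it lets existing integrations run against the new model in staging while the call sites themselves are updated.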

Recommendation:

Always set up staging environments to test your existing applications against the new model version before switching over in production. This proactive step helps catch breaking changes early.

Developer Community Feedback: Top Bugs and Unexpected Behaviors

No software release is perfect, and GPT-5.3-Codex is no exception. Here are the top issues currently being discussed within the developer community.

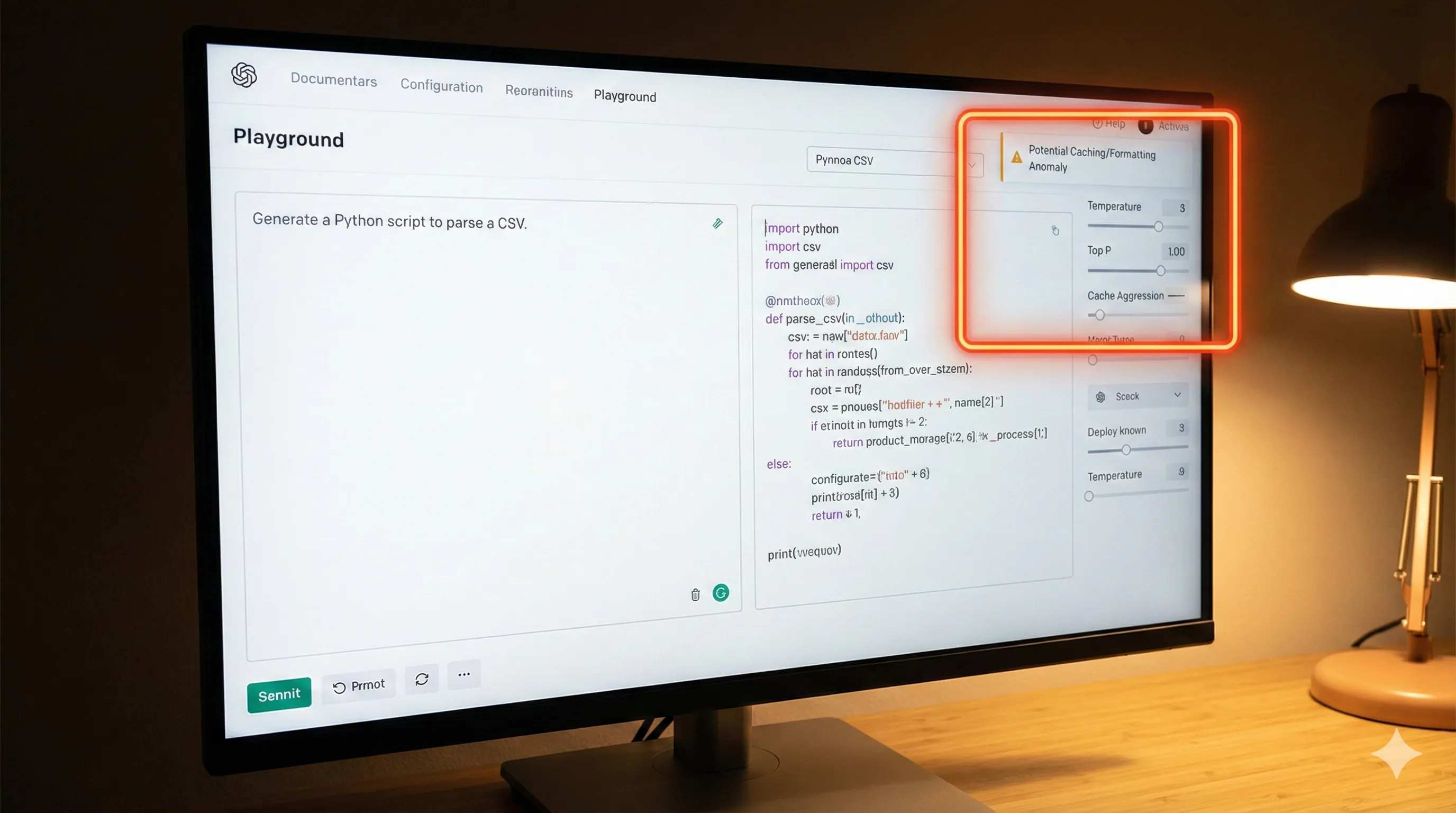

- Over-aggressive Caching in Playground:

Several developers report that the OpenAI Playground seems to be caching results too aggressively.

This makes it difficult to iterate on prompts, as minor changes sometimes fail to produce new or updated output.

- Inconsistent Formatting:

When asked to generate code adhering to a specific style guide (e.g., PEP 8, Prettier), the model is inconsistent.

It often gets about 80% of the formatting correct but misses key rules, requiring manual cleanup.

- "Hallucinated" Libraries:

In rare cases, especially for niche problems, the model has been observed importing and using libraries that do not actually exist.

This issue underscores the continued and critical need for human verification of all generated code before deployment.
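Part of that verification can be automated for Python output. The sketch below parses generated source with `ast` and reports imported top-level modules that cannot be found in the current environment. A module missing locally may simply not be installed, so treat a hit as a prompt to look the package up, not as proof that it is hallucinated. The function name and sample input are illustrative.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return top-level modules imported by `source` that are not found locally."""
    tree = ast.parse(source)
    modules: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            # level == 0 skips relative imports such as `from . import x`
            modules.add(node.module.split(".")[0])
    return sorted(m for m in modules if importlib.util.find_spec(m) is None)


# Stand-in for model output; the last import is deliberately made up.
generated = """
import json
from collections import OrderedDict
import totally_made_up_http_lib
"""
missing = unresolved_imports(generated)
print(missing)
```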

Pricing Model Review: Is the Value Proposition Improved?

GPT-5.3-Codex did not change the base per-token pricing for standard requests.

However, it introduced a new pricing dimension that significantly impacts costs for context-heavy tasks.

- Context Token Tiers:

Standard input tokens are priced as before.

Crucially, when using the new `file_references` feature, the tokens from those referenced files are billed at a 50% lower rate, labeled as "Context Tokens."

This heavily incentivizes using the new multi-file feature over stuffing extensive context directly into the main prompt.
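To see the effect of the discount, the sketch below compares the input cost of pasting 8,000 tokens of project code into the prompt against passing the same tokens through `file_references`. The price of $0.01 per 1,000 tokens is a made-up placeholder, since the article gives no base price; only the 50% context discount comes from the text.

```python
def estimate_input_cost(
    prompt_tokens: int,
    context_tokens: int,
    price_per_1k: float,
    context_discount: float = 0.5,  # 50% lower rate for context tokens
) -> float:
    """Estimate input cost when some tokens are billed as discounted context tokens."""
    prompt_cost = prompt_tokens / 1000 * price_per_1k
    context_cost = context_tokens / 1000 * price_per_1k * (1 - context_discount)
    return prompt_cost + context_cost


PRICE_PER_1K = 0.01  # ASSUMPTION: placeholder price in USD, not an official rate

# Everything pasted into the prompt: 500 instruction tokens + 8,000 code tokens.
inline_cost = estimate_input_cost(8500, 0, PRICE_PER_1K)

# Same code supplied through file_references instead.
referenced_cost = estimate_input_cost(500, 8000, PRICE_PER_1K)

print(f"Inline:     ${inline_cost:.3f}")      # $0.085
print(f"Referenced: ${referenced_cost:.3f}")  # $0.045
```

Under these assumed numbers the input bill drops by a little under half, because the short instruction text is still billed at the standard rate.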

Analysis:

This is a clear improvement to the value proposition for developers working on existing, complex projects that benefit from broader context.

It makes in-depth, context-aware code generation more affordable and practical.

For simple, one-off snippets, the cost remains consistent with previous versions.

The expensive "Proactive Refactoring" mode, however, is likely only justifiable for enterprise-level code quality assurance workflows due to its higher cost per token.

Bias & Safety Improvements: An Ethical Audit

OpenAI's system card claims reduced bias and improved safety for GPT-5.3-Codex.

Our findings largely support these claims with concrete examples.

- Reduced Insecure Code Generation:

The model is significantly better at avoiding common vulnerabilities.

When prompted to write code for database queries, it now defaults to using parameterized queries or ORMs, actively avoiding raw string formatting that is susceptible to SQL injection.

This is a major step forward for security.
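The difference between the two approaches is easy to demonstrate with Python's built-in `sqlite3` module. This is a generic illustration of the vulnerability class, not output from the model; the table and the injection payload are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

user_input = "alice' OR '1'='1"  # classic injection payload

# Unsafe: the payload becomes part of the SQL text and matches every row.
unsafe_sql = f"SELECT name FROM users WHERE name = '{user_input}'"
unsafe_rows = conn.execute(unsafe_sql).fetchall()

# Safe: the driver binds the value, so it is compared as a literal string.
safe_rows = conn.execute(
    "SELECT name FROM users WHERE name = ?", (user_input,)
).fetchall()

print(len(unsafe_rows))  # 2
print(len(safe_rows))    # 0
```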

- License Awareness:

A subtle but important improvement is the model's increased awareness of software licenses.

When generating code snippets that may be derived from GPL-licensed projects, it now often includes a comment noting the potential licensing obligation.

This is an observation from the community and not an officially documented feature.

- Enhanced Refusal:

The model is more robust in refusing to generate code for clearly malicious purposes (e.g., ransomware, keyloggers) or ethically questionable applications (e.g., automated scraping of personal data without consent).

This demonstrates improved safety guardrails.

Security Vulnerabilities and Mitigation Strategies

The GPT-5.3-Codex update introduces a new, nuanced security consideration for developers.

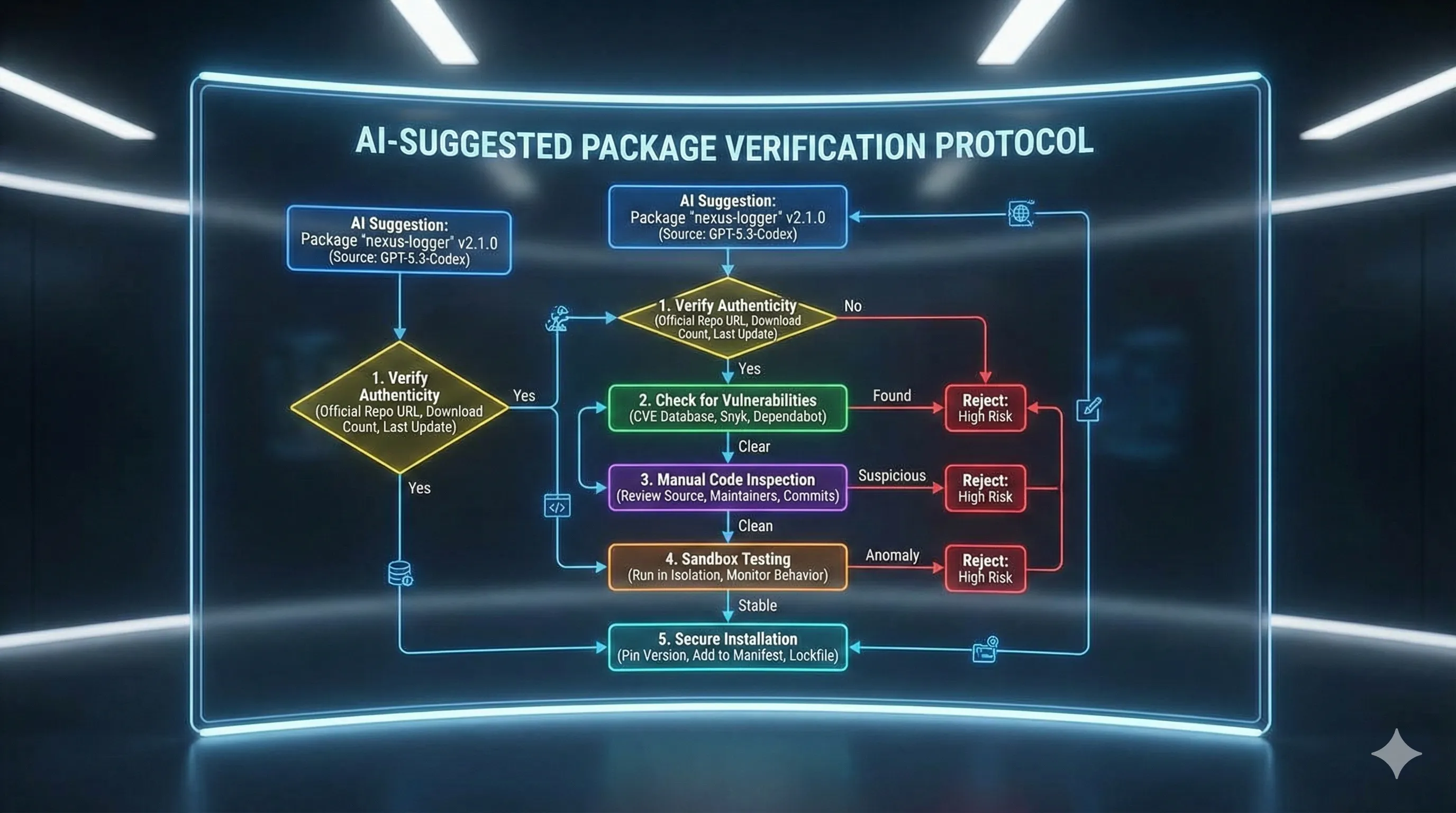

- New Risk: "Zero-Day Package Suggestion" Vulnerability:

Because the model is trained on vast amounts of recent internet data, its new "Integrated Dependency Analysis" feature may suggest newly published but malicious packages from repositories like npm or PyPI.

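Attackers seed such packages using techniques like typosquatting (names that closely mimic popular packages) or starjacking (borrowing another repository's popularity metrics), which could lead an unwitting developer to install a compromised package.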

Mitigation Strategy:

Always treat package suggestions from any AI model as untrusted input.

Before installing any new dependency suggested by the model, developers must take the following steps:

- Check for known vulnerabilities using security tools like Snyk or Dependabot.

- Pin the exact version in their project's dependency file (e.g., `package.json`, `requirements.txt`) to prevent unexpected or malicious updates.
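The pinning step lends itself to a simple automated check. The sketch below scans the text of a `requirements.txt` and flags entries that are not pinned with `==` to an exact version. It is a deliberately simplified parser (environment markers are stripped, and URL or editable installs are not handled), and the function name is ours.

```python
import re

# Matches "name==1.2.3" with optional extras; wildcards such as "8.*" do not count.
PINNED = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]+\])?==[^=\s*]+$")


def unpinned_requirements(text: str) -> list[str]:
    """Return requirement lines that are not pinned to one exact version."""
    flagged = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0]            # drop trailing comments
        line = line.split(";", 1)[0].strip()   # drop environment markers
        if not line or line.startswith("-"):   # skip blanks and pip options like -r
            continue
        if not PINNED.match(line):
            flagged.append(line)
    return flagged


requirements = """
requests==2.31.0
flask>=2.0
numpy
# dev tools
pytest==8.*
"""
flagged = unpinned_requirements(requirements)
print(flagged)
```

Running a check like this in CI makes an unpinned, AI-suggested dependency fail the build instead of silently floating to whatever version is published next.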

Strategic Outlook: How GPT-5.3-Codex Redefines AI-Assisted Development

This update to GPT-5.3-Codex is more than just an incremental improvement; it represents a strategic move that further embeds AI into the software development lifecycle (SDLC).

- Competitive Landscape:

The multi-file context feature is a direct response to similar capabilities in competitor models like Google's Gemini-3 Dev and Anthropic's Claude 4-Dev.

By making this context cheaper to use, OpenAI is aggressively defending and expanding its market share among professional developers.

- Impact on SDLC:

GPT-5.3-Codex solidifies the shift from "AI as a snippet generator" to "AI as a pair programmer."

Its enhanced ability to understand project-wide context and suggest refactors pushes its utility further into the architecture, code review, and maintenance phases of development, well beyond just initial code creation.

This continues to redefine the role of a junior developer, placing more emphasis on architectural oversight, critical evaluation, and security awareness of AI-generated code.