🚀 Key Takeaways

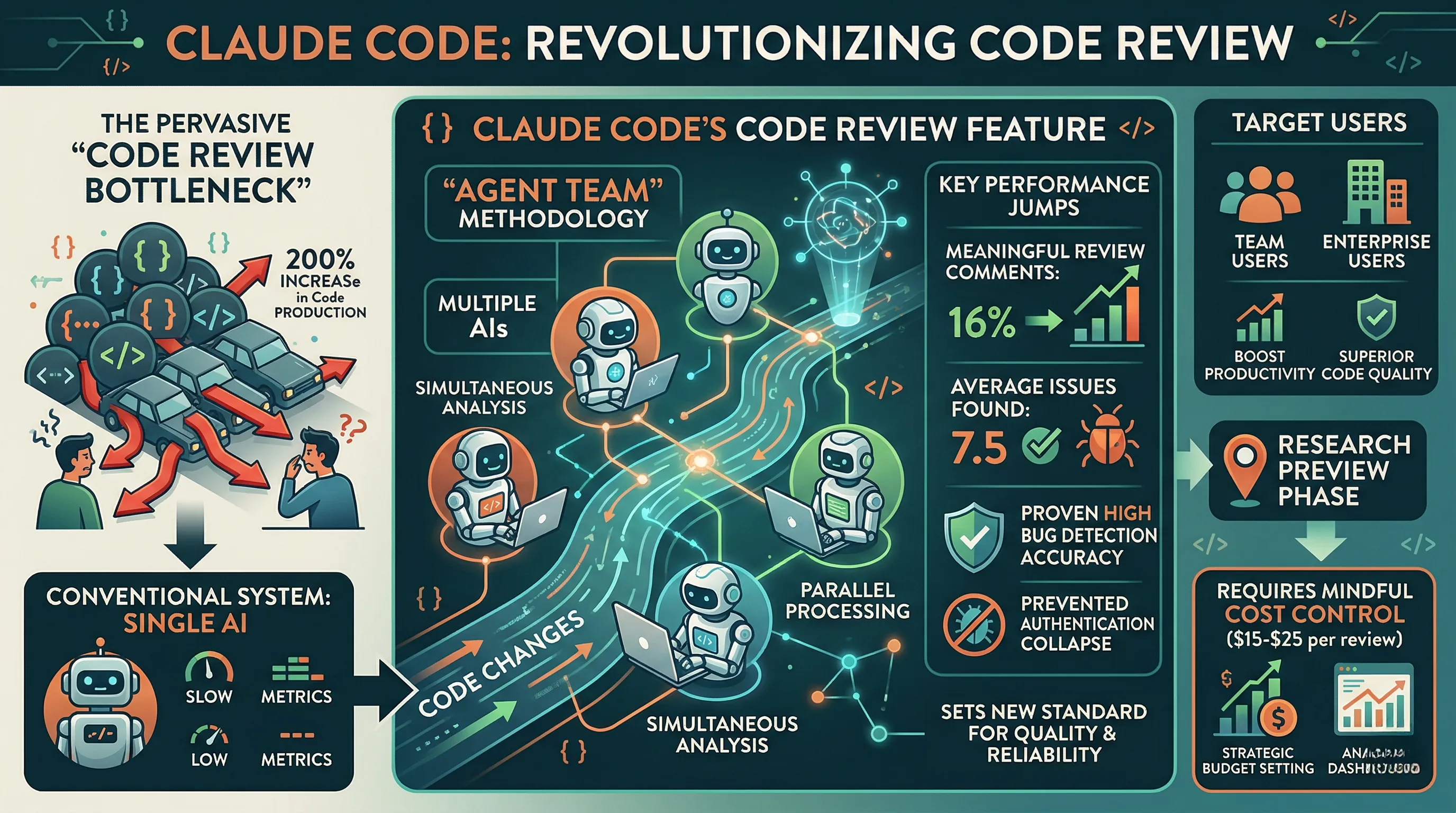

- Claude Code's Code Review feature raises the reported share of meaningful review comments from 16% to 54% and finds an average of 7.5 issues in large code changes.

- It has caught critical bugs during the preview, including an error that could have compromised an entire authentication system.

- By leveraging an innovative AI 'agent team' for simultaneous analysis, it effectively addresses the industry-wide code review bottleneck, ensuring reliable and thorough reviews for every Pull Request.

- While offering advanced analysis, the feature averages $15-$25 per review, necessitating strategic cost control measures like budget setting and analysis dashboards.

Anthropic introduces Claude Code's Code Review Feature, a groundbreaking solution designed to revolutionize the software development lifecycle.

The tool targets the "code review bottleneck," a problem made worse by a reported 200% increase in developer code production over the last year. Its goal is to make deep code analysis and the detection of hidden bugs a routine part of every review.

Unlike conventional systems, Claude Code employs an advanced 'agent team' methodology, where multiple AIs simultaneously analyze code changes, rather than relying on a single AI.

This parallel analysis raises the meaningful review comment ratio from 16% to 54% and surfaces an average of 7.5 issues in large code changes.

It has already shown its value in bug detection by identifying critical errors that could have led to the collapse of an authentication system.

Currently in a research preview phase, this feature is poised to become an indispensable asset for team users and enterprise users seeking to simultaneously boost developer productivity and maintain superior code quality.

While offering unparalleled depth, it requires mindful cost control, averaging $15-$25 per review depending on code size and complexity, emphasizing the need for strategic budget setting and analysis dashboards.

1. Beyond the Surface: How Anthropic's AI Agent Team Reimagines Code Review

🔹 From Monolith to Microservices: The AI Agent Swarm

Anthropic’s approach deviates fundamentally from single-model AI analysis by deploying a specialized 'agent team' to scrutinize code changes.

This isn't one AI trying to do everything; it’s a coordinated system where multiple AIs operate in parallel, each with a distinct task.

One agent might focus on identifying potential race conditions, while another simultaneously hunts for logic flaws, and a third specializes in spotting security vulnerabilities.

This parallel process is followed by a crucial filtering stage, where agents cross-reference findings to eliminate false positives and then collaboratively prioritize the identified bugs based on severity.
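The three stages described above (parallel specialist analysis, false-positive filtering, severity ranking) can be illustrated with a minimal Python sketch. Everything in it is a hypothetical stand-in: the agent functions use simple substring checks in place of real AI models, `is_confirmed` is a toy cross-check, and the severity scale is invented for the example. It is not Anthropic's implementation, only a picture of the control flow.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Finding:
    agent: str
    line_no: int
    message: str
    severity: int  # hypothetical scale: 3 = critical, 2 = major, 1 = minor


# An "agent" here is any function that reads the changed lines and returns findings.
Agent = Callable[[list[str]], list[Finding]]


def security_agent(lines: list[str]) -> list[Finding]:
    # Toy stand-in for a security specialist: flags hard-coded secrets.
    return [Finding("security", i, "hard-coded secret", 3)
            for i, text in enumerate(lines, start=1) if "password =" in text]


def logic_agent(lines: list[str]) -> list[Finding]:
    # Toy stand-in for a logic specialist: flags a suspicious boolean test.
    return [Finding("logic", i, "condition may always be true", 2)
            for i, text in enumerate(lines, start=1) if "== True or" in text]


def concurrency_agent(lines: list[str]) -> list[Finding]:
    # Toy stand-in for a race-condition specialist.
    return [Finding("concurrency", i, "shared counter updated without a lock", 2)
            for i, text in enumerate(lines, start=1) if "counter +=" in text]


def is_confirmed(finding: Finding, lines: list[str]) -> bool:
    # Toy cross-check: a finding on a commented-out line is a false positive.
    return not lines[finding.line_no - 1].lstrip().startswith("#")


def review(lines: list[str], agents: list[Agent]) -> list[Finding]:
    # Stage 1: run every agent on the same change in parallel.
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        batches = list(pool.map(lambda agent: agent(lines), agents))
    findings = [f for batch in batches for f in batch]
    # Stage 2: filter out findings that fail the cross-check.
    confirmed = [f for f in findings if is_confirmed(f, lines)]
    # Stage 3: most severe first, then by line number.
    return sorted(confirmed, key=lambda f: (-f.severity, f.line_no))


sample_change = [
    "counter += 1",
    "# password = 'hunter2'",
    "password = 'hunter2'",
    "if ok == True or retry:",
]
for finding in review(sample_change, [concurrency_agent, logic_agent, security_agent]):
    print(finding.severity, finding.agent, finding.line_no, finding.message)
```

In this toy run the commented-out secret on line 2 is discarded by the filter, and the remaining security finding is listed ahead of the two lower-severity findings.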

🔹 Beyond Syntax: The Shift to Semantic Bug Hunting

This multi-agent architecture directly addresses the critical bottleneck that has emerged from a 200% increase in developer code production over the last year.

Instead of a cursory check, teams get a deep, reliable analysis for every single Pull Request, transforming the review process from a chore into a robust safety net.

The impact is tangible: the ratio of meaningful review comments skyrockets from a mere 16% to an impressive 54%.

This system unearths an average of 7.5 significant issues in large code changes and has demonstrated its value by flagging critical errors, such as one that could have collapsed an entire authentication system.

For enterprise users, this translates into a powerful assurance that even with accelerated development cycles, code quality and system stability are not compromised.

🔹 The Enterprise Calculus: Weighing Cost Against Catastrophe

While the system's power is undeniable, its advanced analysis comes with a notable financial footprint.

The average cost per review is estimated between $15 and $25, a figure that fluctuates based on the size and complexity of the code being analyzed.

This positions the feature as a premium, enterprise-grade tool rather than a universally applied utility.

Successful adoption will likely hinge on strategic cost control measures.

Best practices will likely involve setting firm budgets, applying the feature selectively to critical repositories, and utilizing analysis dashboards to monitor return on investment.
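One way to picture a firm budget combined with selective application is a small gate that decides whether a pull request should be sent for review. The sketch below is an assumption-laden illustration: the `ReviewBudget` class, its fields, and the repository names are all hypothetical, and it does not represent any actual Claude Code setting or API.

```python
from dataclasses import dataclass


@dataclass
class ReviewBudget:
    monthly_limit_usd: float      # hypothetical spending cap for the month
    critical_repos: set[str]      # hypothetical list of repos worth the cost
    spent_usd: float = 0.0

    def should_review(self, repo: str, estimated_cost_usd: float) -> bool:
        # Only critical repositories qualify for the expensive deep review.
        if repo not in self.critical_repos:
            return False
        # Skip the review if it would push spending over the cap.
        return self.spent_usd + estimated_cost_usd <= self.monthly_limit_usd

    def record(self, actual_cost_usd: float) -> None:
        # Track what each completed review actually cost.
        self.spent_usd += actual_cost_usd


budget = ReviewBudget(monthly_limit_usd=100.0, critical_repos={"auth-service"})
print(budget.should_review("auth-service", 25.0))  # critical repo, within budget
print(budget.should_review("docs-site", 15.0))     # not on the critical list

for _ in range(4):
    budget.record(25.0)                            # four reviews at the top of the range
print(budget.should_review("auth-service", 15.0))  # cap reached
```

With a $100 cap and reviews at the upper end of the $15-$25 range, the budget is exhausted after four reviews, which is why targeting only critical repositories matters.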

2. Shaking the Status Quo: How Anthropic's Innovation Reshapes Developer Workflows

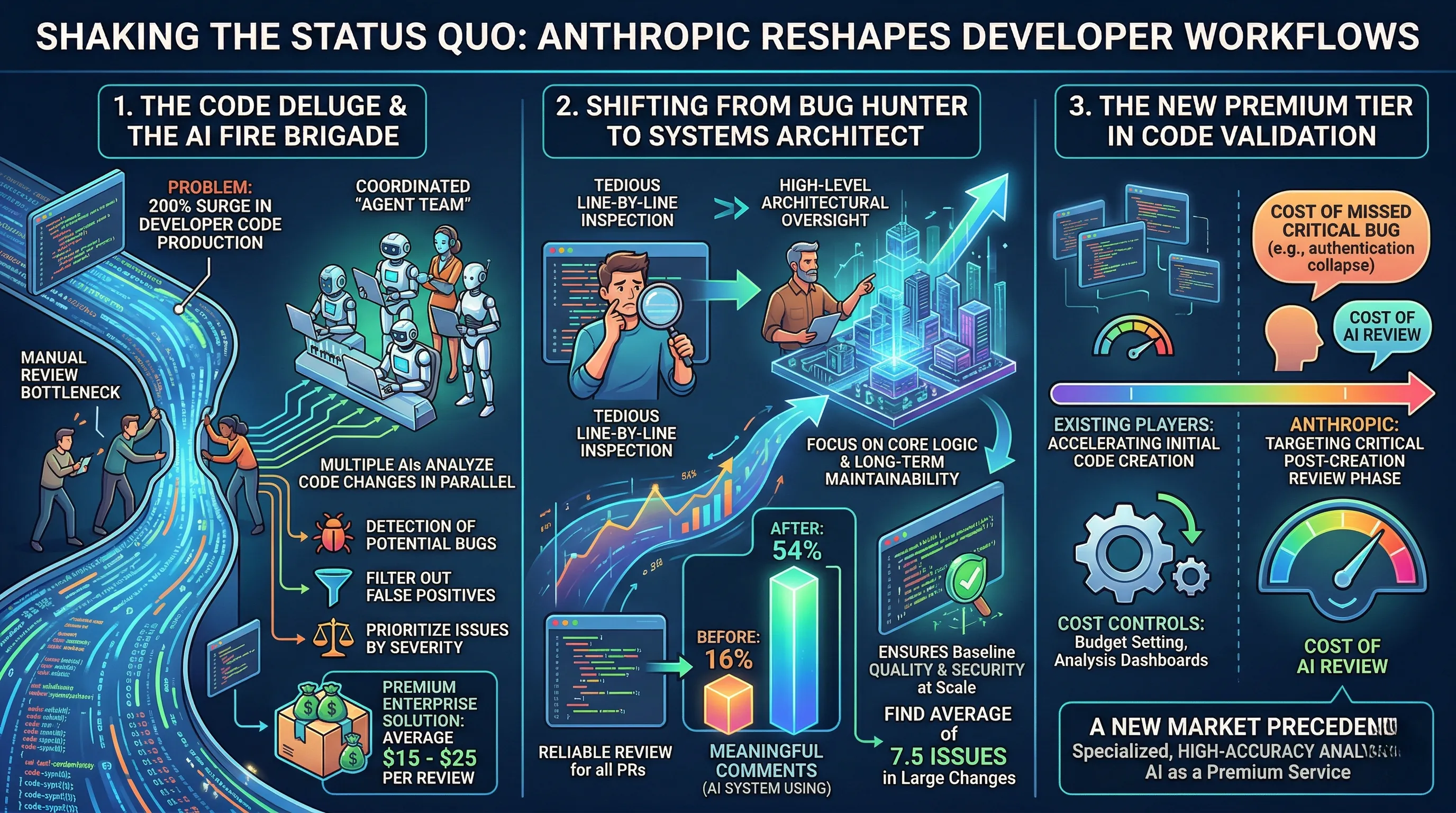

🔹 The Code Deluge and the AI Fire Brigade

Developer code production has surged by an unprecedented 200% in the last year, creating a severe bottleneck in the manual code review process.

Anthropic's answer is not a single AI assistant but a coordinated 'agent team' designed for deep code analysis rather than superficial, quick-glance reviews.

This system operates by having multiple AI agents analyze code changes in parallel, systematically detecting potential bugs, filtering out false positives, and then prioritizing the identified issues by severity.

The cost structure reflects this intensive approach, with an average price of $15-$25 per review, positioning it as a premium, enterprise-grade solution.

🔹 Shifting from Bug Hunter to Systems Architect

The direct impact on workflow is a fundamental role change for human developers, moving them from tedious line-by-line inspection to high-level architectural oversight.

By providing a reliable review system for all Pull Requests, the tool ensures a baseline of quality and security that was previously difficult to maintain at scale.

Internal data shows the ratio of meaningful review comments skyrockets from 16% to 54% when using the tool, as developers are freed from catching minor errors to focus on substantive improvements.

Finding an average of 7.5 issues in large code changes, this AI system handles the granular work, allowing senior engineers to dedicate their expertise to the core logic and long-term maintainability of the codebase.

🔹 The New Premium Tier in Code Validation

This feature carves out a distinct and strategic niche in the competitive AI landscape, focusing on code validation rather than just generation.

While existing market players have focused on accelerating initial code creation, Anthropic is targeting the critical, and often more costly, post-creation review phase.

The pricing model and the need for cost controls such as budget setting and analysis dashboards point to a tool built for enterprise teams. For these teams, the cost of a single missed critical bug, such as the recently detected flaw that could have collapsed an authentication system, far outweighs the cost of the AI review.

This establishes a new precedent in the market: specialized, high-accuracy analytical AI as a premium service, forcing competitors to decide whether to compete on generation speed or validation depth.

3. The Numbers Speak: Expert Validation of Claude Code's Bug-Busting Prowess

🔹 Quantifying the Quality Leap

Early performance metrics from Anthropic's research preview are not just promising; they represent a significant statistical shift in code review efficacy.

The most striking figure is the increase in the meaningful review comment ratio, which has surged from a baseline of 16% to an impressive 54%.

For large, complex code changes, the tool identifies an average of 7.5 distinct issues, moving far beyond simple linting or style checks.

This quantitative output is backed by high accuracy, demonstrated in rigorous internal testing and real-world scenarios.

🔹 From Bottleneck to Breakthrough

The jump to a 54% meaningful comment ratio signals a profound change in the nature of code review, transforming it from a procedural check-box to a substantive quality gate.

This directly addresses the industry-wide code review bottleneck, a growing pain point for teams dealing with a 200% increase in developer code production over the past year.

Catching an average of 7.5 issues in a large change before merge keeps those issues from reaching a staging or production environment, saving engineering hours typically lost to post-deployment hotfixes.

Ultimately, this provides a reliable, deep-analysis system for all pull requests, ensuring that even code submitted at the end of a sprint receives the same scrutiny as a flagship feature.

🔹 The Authentication System That Didn't Collapse

Expert analysis is coalescing around a key takeaway: this is less a review tool and more a systemic safety net for development teams.

The most-cited example from the preview is the tool's detection of a critical, non-obvious error that would have caused the total collapse of a user authentication system.

This single catch illustrates the value of the feature's deep analytical approach, suggesting it can find the kind of hidden, architectural flaws that human reviewers, under pressure, might overlook.

The consensus is that by simultaneously boosting developer productivity and hardening code quality, Claude Code is positioned to become a key component of the modern enterprise software development lifecycle.

4. The Cost of Precision: Navigating Claude Code's Economic Realities

🔹 The Price Tag on Deep Analysis

The advanced analytical power of Claude Code's review feature comes with a significant financial consideration.

Anthropic has positioned the service with an average cost per review ranging from $15 to $25, a figure that fluctuates based on the size and complexity of the code being scrutinized.

This pricing model is a direct result of its underlying methodology; deploying an 'agent team' of multiple AIs for simultaneous, parallel analysis is a computationally intensive task far exceeding that of a single AI performing a quick scan.

Consequently, this is not a tool designed for rapid, low-cost checks but for comprehensive, deep-dive investigations into code changes.

🔹 Shifting from Line-Item Expense to Strategic Investment

For enterprise and team users, this cost structure fundamentally reframes AI-powered code review as a strategic investment rather than a simple operational expense.

Spending $25 to review a single pull request might initially seem steep, but its value is realized in risk mitigation.

The system's ability to find an average of 7.5 issues in large code changes and unearth critical errors—such as one that could have collapsed an entire authentication system—demonstrates a clear return on investment.

This cost becomes a form of insurance, safeguarding against the far higher costs associated with deploying buggy code, including emergency patches, system downtime, and loss of customer trust.

🔹 Implementing Financial Guardrails for AI Adoption

Given the potential for escalating costs, effective cost management is not just recommended; it is a prerequisite for sustainable use.

Anthropic's approach necessitates the implementation of strict cost control measures, which are expected to be integral to the platform.

Development teams must leverage tools for setting firm budgets to prevent runaway spending.

Furthermore, applying the feature on a repository-specific basis allows teams to target this high-powered analysis on mission-critical codebases while using less intensive methods elsewhere.

Comprehensive analysis dashboards will be essential for managers to monitor spending, track the tool's effectiveness, and ensure the financial outlay aligns with improvements in code quality and developer productivity.

5. Pioneering the Future: From Research Preview to Indispensable Dev Tool

🔹 The Transition from Experiment to Essential

Currently designated as a research preview, Claude Code's review feature is on a clear trajectory to become a cornerstone tool for enterprise development teams.

The immediate objective is to transition from its current experimental phase into a fully integrated solution that systematically boosts both developer productivity and overall code quality.

This evolution is critical to addressing the industry-wide code review bottleneck, a problem exacerbated by a reported 200% increase in developer code production over the last year.

The stated goal is to provide a highly reliable, automated review system for every single Pull Request submitted.

🔹 Balancing Power with Pragmatism: The Cost-Control Roadmap

The deep analysis provided by the feature's 'agent team' method comes at a tangible cost, with reviews averaging between $15 and $25 depending on code complexity.

To make this scalable for enterprise users, the roadmap anticipates sophisticated cost control measures.

Features like budget setting will empower organizations to manage expenditure predictably, eliminating surprise costs and simplifying financial planning for engineering departments.

The ability to apply the tool to specific repositories will allow teams to focus this high-powered analysis on their most critical codebases, maximizing return on investment.

Finally, the introduction of analysis dashboards will provide managers with the concrete data needed to demonstrate the tool's value, correlating review costs with a measurable reduction in production incidents.
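The kind of figures such a dashboard might report can be sketched in a few lines of Python. The `ReviewRecord` fields, the `summarize` function, and the sample numbers are all hypothetical and chosen only for illustration; they are not data from Anthropic or output from the actual product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewRecord:
    repo: str
    cost_usd: float
    issues_found: int


def summarize(records: list[ReviewRecord]) -> dict:
    # Aggregate spend and findings into the headline numbers a manager might track.
    total_cost = sum(r.cost_usd for r in records)
    total_issues = sum(r.issues_found for r in records)
    count = len(records)
    return {
        "reviews": count,
        "total_cost_usd": total_cost,
        "avg_cost_usd": total_cost / count if count else 0.0,
        "avg_issues": total_issues / count if count else 0.0,
        # Undefined when no issues were found, so report None instead of dividing by zero.
        "cost_per_issue_usd": total_cost / total_issues if total_issues else None,
    }


# Made-up sample data for illustration only.
records = [
    ReviewRecord("auth-service", 25.0, 8),
    ReviewRecord("auth-service", 15.0, 7),
    ReviewRecord("billing", 20.0, 0),
]
for name, value in summarize(records).items():
    print(f"{name}: {value}")
```

A metric such as cost per issue found gives a rough basis for comparing review spend against the cost of fixing the same issues after release.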

🔹 The Long-Term Vision: An AI Co-Pilot for Code Integrity

The ultimate ambition for this technology extends beyond just catching bugs; it aims to fundamentally reshape the software development lifecycle.

By finding an average of 7.5 issues in large code changes and proving its ability to detect critical errors that could collapse an entire system, the tool is positioned as a proactive guardian of code health.

The long-term vision is for the AI to become an indispensable partner, ensuring a baseline of quality and security that allows senior developers to focus on high-level architectural decisions rather than routine review tasks.

This shift promises to not only accelerate development cycles but also to cultivate a higher standard of engineering excellence across the entire organization.

6. 💡 Tech Talk: Making Sense of the Jargon

- Agent Team: Imagine having a highly skilled team of detectives, where each detective focuses on a different part of a complex crime scene at the same time. Instead of one detective slowly sifting through everything, this 'agent team' tackles the investigation in parallel, making it much faster and more thorough.

- Code Review Bottleneck: Picture a busy highway with many cars (new code) trying to merge, but there are only a few slow inspectors checking each car. This creates a massive traffic jam, slowing down the entire journey (development process). The bottleneck is that slowdown caused by too much to check and too few checkers.

- Filtering False Positives: Think of a very sensitive smoke alarm that goes off not just for real fires, but also for steam from a hot shower or burnt toast. "False positives" are those unnecessary alarms. Filtering them means the alarm gets smarter, learning to ignore the harmless things and only alert you to actual dangers (real bugs), so you don't waste time on non-issues.
