🚀 Key Takeaways

- Cyber risks are escalating significantly, impacting critical infrastructure and leading to substantial financial losses across digital and telecom sectors, as evidenced by investor pullbacks in March 2026.

- New and sophisticated LLM jailbreak methods are emerging, including those associated with world models and the innovative GAP technique, posing fresh challenges to AI safety mechanisms.

- Significant AI security vulnerabilities have been identified in major platforms such as OpenAI's Codex (with critical supply chain implications) and ServiceNow, requiring urgent attention.

- Apple is tightening age verification controls on iPhones, demanding identity verification for downloads and setting a potential new standard, even in the absence of explicit legal mandates at the device level.

As March 2026 draws to a close, the digital landscape is marked by a pronounced escalation of cyber threats, posing formidable challenges across global sectors.

The "Cyber Risk Escalation" is profoundly impacting critical infrastructure and causing widespread GPS disruption in global shipping lanes, signaling a new level of sophistication in threat activities.

Financial markets are feeling the pinch, with S-RM reporting that 65% of investors saw Digital & Telecoms infrastructure deals fall through due to overwhelming cybersecurity risks, alongside a surge in widespread financial fraud, credential harvesting, and illicit content distribution.

Amidst this heightened threat environment, the realm of Artificial Intelligence is grappling with its own set of complex issues.

Novel LLM jailbreak methods are rapidly developing, with new techniques emerging that move beyond previous direct approaches, particularly those associated with advanced world models from AMI Labs and LeCun.

The introduction of GAP, an LLM jailbreak method, specifically addresses limitations in existing tree-based approaches, underscoring the continuous cat-and-mouse game between AI safety and evasion.

Furthermore, critical AI security vulnerabilities have surfaced, notably in OpenAI's Codex, presenting significant supply chain implications, and similar issues have been identified within ServiceNow's AI offerings, all currently under research preview as of March 2026.

Adding to the month's significant developments, Apple has moved to tighten age verification controls, implementing a "No Age Verification, No Download!" policy that now sees iPhones demanding identity verification for app downloads.

This action comes despite the legal context indicating that law does not explicitly mandate age verification at the device level, suggesting Apple is proactively setting a higher bar for user accountability.

Microsoft's routine Patch Tuesday for March 2026 also plays a crucial role in maintaining system integrity amidst these evolving threats, while OpenAI continues to advance its Agent Stack with components like GPT-5.4 and Codex.

1. Unmasking the Next-Gen AI Jailbreaks: From World Models to GAP

🔹 The Architectural Flaw: World Models and Direct LLM Targeting

The March 2026 security landscape reveals a new, more sophisticated class of LLM jailbreaks that diverge sharply from previous prompt-injection techniques.

These emergent methods are directly associated with theoretical work on world models, pioneered by research from AMI Labs and Yann LeCun earlier this month.

Unlike their predecessors, which tricked surface-level safety filters, these new vulnerabilities orient directly to the Large Language Model's core architecture, exploiting its internal representation of reality.

A prime example is the GAP (Generative Adversarial Pathway) method, specifically engineered to overcome the limitations observed in now-outdated tree-based jailbreak search techniques.

🔹 Exploiting the Core: The Impact on Real-World AI Agents

The real-world implication of this architectural approach is that these jailbreaks are significantly harder to patch with simple input filters or content moderation.

They represent a fundamental compromise of the model's reasoning process, not just its response generation.

This poses a direct threat to integrated systems like OpenAI's Agent Stack, which leverages the powerful new GPT-5.4 model alongside its Codex component.

An attacker using a GAP-like method could potentially bypass safety mechanisms built into GPT-5.4 to generate malicious code via Codex, creating a severe vulnerability with significant supply chain implications, especially given Codex's current "Research Preview" status.

🔹 Beyond Patches: The Industry's Call for a Foundational Fix

The consensus among security analysts is that these world-model-oriented exploits represent a paradigm shift in AI safety research.

The focus is rapidly moving away from a reactive game of cat-and-mouse with prompt engineers toward a necessary, proactive rethink of core AI architecture.

The GAP method is being treated as a critical proof-of-concept, demonstrating that theoretical attacks on a model's world-understanding can be reliably executed.

Experts argue that effective countermeasures will require foundational changes to how models are trained and aligned, rather than relying on the bolt-on safety layers that have proven porous to these advanced techniques.

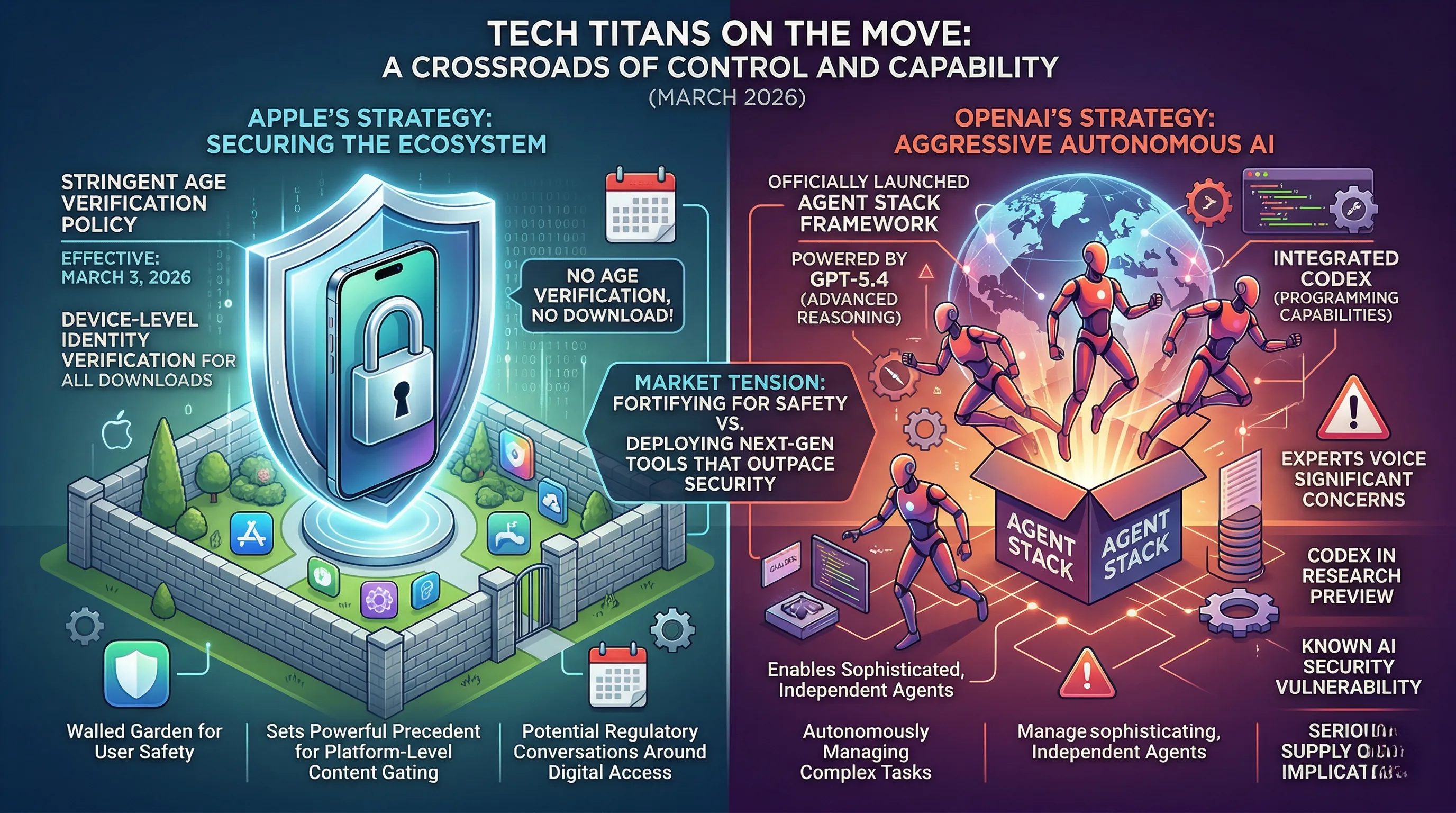

2. Tech Titans on the Move: Apple's Grip Tightens, OpenAI's Agent Stack Emerges

🔹 March Maneuvers: New Policies and Powerful Platforms

This month saw two industry-defining moves from major tech players.

Effective Tuesday, March 3, 2026, Apple implemented a stringent new age verification policy for its App Store.

The new rule, summarized as 'No Age Verification, No Download!', now demands direct identity verification on iPhones before any downloads can proceed.

Notably, this policy was enacted without a specific legal mandate requiring such device-level verification.

In parallel, OpenAI has officially launched its highly anticipated Agent Stack, a development framework powered by the new GPT-5.4 model, which also debuted in March 2026, and the code-generation model, Codex.

🔹 Reshaping Access and Autonomy

For the global community of iPhone users, Apple's policy creates a significant new layer of friction, fundamentally altering the download process from a simple tap to a mandatory identity check.

This proactive stance sets a powerful precedent for platform-level content gating across the industry, potentially shaping future regulatory conversations around digital access.

Simultaneously, OpenAI's Agent Stack represents a monumental step forward in autonomous AI, providing developers with a toolkit to build more sophisticated and independent agents.

The integration of GPT-5.4's advanced reasoning with Codex’s programming capabilities signals a market shift toward AI that can autonomously manage complex, multi-step digital tasks, far beyond simple generative outputs.

🔹 A Crossroads of Control and Capability

The expert consensus highlights a stark contrast in strategy between the two titans.

While Apple's move is widely seen as a push for a more controlled and secured ecosystem, security analysts are voicing significant concerns about OpenAI's offering.

The primary issue centers on the inclusion of Codex in the Agent Stack while it remains in Research Preview as of March 2026.

Codex is known to have an AI security vulnerability with serious supply chain implications, making its deployment in a powerful agentive framework a high-risk gambit.

This juxtaposition defines the current market tension: one giant is fortifying its walled garden for user safety, while the other is aggressively deploying next-generation tools that could outpace existing security paradigms.

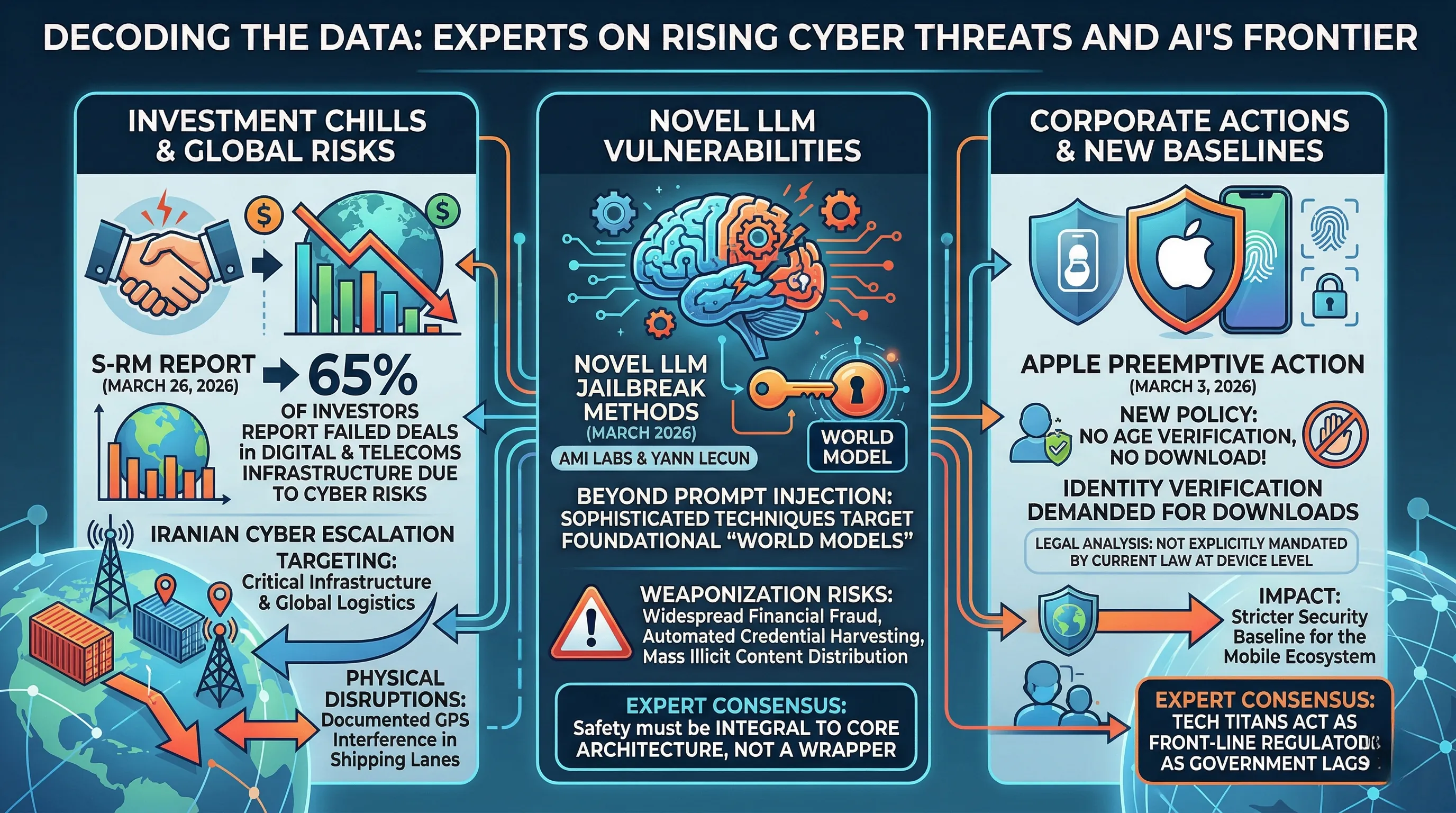

3. Decoding the Data: Experts Weigh in on Rising Cyber Threats and AI's Frontier

🔹 A Confluence of Crises: Investment Chills and AI's New Attack Vectors

The financial ramifications of the escalating cyber threat landscape have become starkly clear.

A March 26, 2026, finding from S-RM revealed that a staggering 65% of investors have seen deals in Digital & Telecoms infrastructure collapse specifically due to unmitigated cyber security risks.

This data point lands amidst a March 2026 Threat Brief highlighting a significant escalation in cyber risk directly related to Iran, targeting critical infrastructure and global logistics.

Simultaneously, the AI frontier has produced a new class of vulnerabilities, with researchers from AMI Labs and luminaries like Yann LeCun identifying novel LLM jailbreak methods in March 2026.

These are not simple prompt injections; they are sophisticated techniques associated with an AI's foundational "world models" and are designed to systematically bypass safety mechanisms.

Against this backdrop, corporations are taking preemptive action, exemplified by Apple's tightened controls on March 3, 2026, which now demand identity verification for downloads—a step that, according to legal analysis, is not explicitly mandated by current law at the device level.

🔹 From Boardrooms to Global Shipping: The Real-World Consequences

The S-RM statistic translates into a tangible chilling effect on innovation and the modernization of essential services, as billions in capital are now too risk-averse to be deployed.

This isn't just a theoretical threat; the state-sponsored cyber risk escalation has manifested in physical disruptions, including documented GPS interference in global shipping lanes.

The advanced LLM jailbreak methods represent a fundamental threat to digital trust, enabling malicious actors to potentially weaponize AI for widespread financial fraud, automated credential harvesting, and the mass distribution of illicit content with newfound efficiency.

Apple's "No Age Verification, No Download!" policy, while exceeding legal requirements, effectively creates a new, more stringent security baseline for the entire mobile ecosystem.

The real-world impact is a trade-off: a potentially safer environment for some users at the cost of new requirements for personal data submission to a central corporate entity.

🔹 Expert Consensus: Proactive Defense is the Only Path Forward

Security analysts interpret the 65% figure on failed investments as a watershed moment, confirming that cyber risk has officially transitioned from a back-office IT concern to a primary C-suite and M&A dealbreaker.

The expert consensus is that cybersecurity due diligence must now be as rigorous as financial auditing.

Regarding AI, the research from AMI Labs and LeCun serves as a critical warning that safety cannot be a mere wrapper around a model; it must be integral to its core architecture.

The dominant take is that the era of simple content filtering is over, and security must address the "world model" itself to prevent systemic abuse.

Finally, experts view Apple's proactive tightening of controls as a bellwether for the tech industry, where corporations are now compelled to act as front-line regulators because the threat landscape is evolving much faster than government legislation can keep pace.

4. Navigating the Digital Minefield: Escalating Cyber Threats & AI's Vulnerable Underbelly

🔹 A Confluence of Critical Vulnerabilities

March 2026 has been marked by a significant escalation in cyber risk, impacting critical infrastructure and causing GPS disruptions in global shipping lanes.

Threat actors are leveraging widespread financial fraud, credential harvesting, and illicit content distribution, with a specific threat brief noting the escalated cyber risk related to Iran.

On the AI front, ServiceNow reported a security vulnerability this month, while a critical flaw was identified in OpenAI's Codex, part of its new Agent Stack which also includes GPT-5.4.

This Codex vulnerability, currently in Research Preview, is noted to have significant supply chain implications.

Concurrently, new LLM jailbreak methods have emerged, including GAP, which addresses limitations in previous tree-based techniques, with research from AMI Labs and Yann LeCun associating these advancements with world models.

Separately, Apple tightened its age verification controls on iPhones as of March 3rd, demanding identity verification for downloads under a "No Age Verification, No Download!" policy.

🔹 From Supply Chains to Shipping Lanes: The Tangible Impact

The confluence of these threats translates into severe real-world consequences.

The GPS disruptions in shipping lanes are not theoretical; they represent a direct threat to the global supply chain, delaying goods and increasing maritime risk.

The financial sector is already reacting, with a stark S-RM finding from March 26th revealing that 65% of investors saw Digital & Telecoms infrastructure deals collapse specifically due to cybersecurity risks.

The vulnerability within OpenAI's Codex is particularly alarming; as a foundational tool for developers, a flaw here means potential backdoors or exploits could be baked into countless applications that rely on it, creating a cascading supply chain nightmare.

For consumers, Apple's stringent new verification policy, last updated on March 26th, creates a significant hurdle, as current law does not explicitly mandate such identity checks at the device level, forcing users into a difficult choice between app access and providing personal identification documents.

🔹 Expert Analysis: A System Under Unprecedented Strain

The security community consensus points to these events not as isolated incidents, but as a systemic shift towards a more volatile and dangerous digital environment.

Experts note that the sophistication of new jailbreak techniques, like GAP, means that even advanced models like GPT-5.4 can be weaponized to bypass their intended safety mechanisms with greater efficiency.

The simultaneous discovery of vulnerabilities in major AI platforms like Codex and ServiceNow underscores a critical, industry-wide blind spot: securing the AI development pipeline itself.

The overarching challenge, as highlighted by Apple's predicament, is the growing tension between implementing robust security measures and navigating a legal landscape that often lags behind technological advancement.

Professionals are urging organizations to move beyond reactive patching and fundamentally reassess their trust in AI supply chains and the integrity of foundational digital infrastructure.

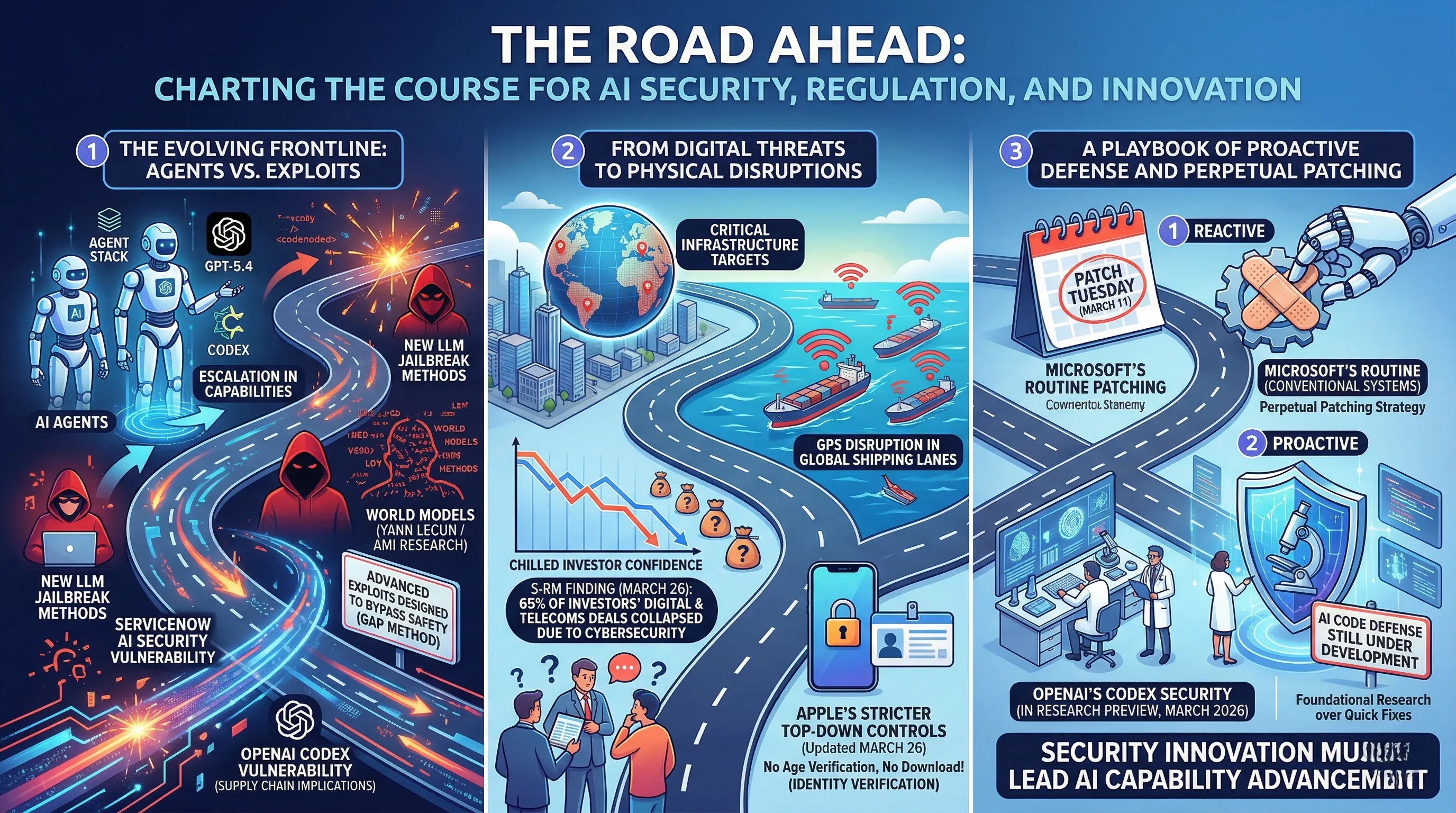

5. The Road Ahead: Charting the Course for AI Security, Regulation, and Innovation

🔹 The Evolving Frontline: Agents vs. Exploits

The AI landscape of March 2026 is defined by a clear escalation in both capabilities and vulnerabilities.

OpenAI is pushing the boundaries of agent-based systems with its Agent Stack, powered by the newly emerged GPT-5.4 and the developer-focused Codex.

Simultaneously, a new class of LLM jailbreak methods is appearing, moving beyond simple prompt manipulation to techniques associated with sophisticated world models, as highlighted by research from figures like Yann LeCun and labs like AMI.

These advanced exploits, such as the GAP method which addresses limitations in older tree-based attacks, are designed specifically to bypass modern safety mechanisms.

This arms race is not purely theoretical, with significant security vulnerabilities identified in major platforms like ServiceNow's AI and, critically, in OpenAI's own Codex, which carries profound supply chain implications.

🔹 From Digital Threats to Physical Disruptions

The consequences of this heightened risk environment are bleeding into the physical and financial worlds.

A March 2026 threat brief highlights an escalation in cyber risks linked to state actors, resulting in widespread financial fraud and credential harvesting operations.

Critical infrastructure has become a primary target, with tangible impacts including GPS disruption across global shipping lanes.

This has chilled investor confidence significantly; a stark finding from S-RM on March 26 revealed that 65% of investors reported Digital & Telecoms infrastructure deals collapsing due to cybersecurity concerns.

In response, platform holders are enacting stricter top-down controls, exemplified by Apple's tightened age verification updated on March 26, which now demands identity verification for downloads, a measure that goes beyond explicit legal mandates.

🔹 A Playbook of Proactive Defense and Perpetual Patching

The industry's path forward appears to be a dual strategy of reactive patching and proactive research.

Microsoft's routine Patch Tuesday on March 11 represents the established, ongoing effort to secure conventional systems.

However, the novel AI threats demand a new approach.

OpenAI's labeling of its Codex Security solution as being "In Research Preview" as of March 2026 is a key indicator; it signals that a full-scale, robust defense for AI-generated code is still under development and not yet ready for deployment.

This suggests the community consensus is that stopping next-generation exploits requires foundational research, not just quick fixes, charting a course where security innovation must run parallel to, if not ahead of, AI capability advancement.

6. 💡 Tech Talk: Making Sense of the Jargon

- LLM Jailbreak Methods: Imagine an AI is a super-smart student who's been told not to say certain things. A "jailbreak" is like finding a clever way to trick that student into saying something they're not supposed to, bypassing their rules.

- World Models (in AI): Think of this as an AI having a mental map or understanding of how the world works, similar to how you know if you drop a ball, it will fall. This deeper understanding can make the AI very powerful but also potentially harder to control if exploited.

- Critical Infrastructure: These are the absolutely essential systems and facilities that our society relies on every day to function – like the power grids that light our homes, the water supply, hospitals, and transportation networks. If they stop working, everything grinds to a halt.

- Supply Chain Implications (AI Security): If a core AI tool, like the brain of many other AI systems, has a security flaw, it's like a faulty engine part being used in thousands of different cars. That one flaw can cause problems for many other systems that rely on it, creating a domino effect across the digital world.

📚 Related Posts

SK hynix introduces turbocharged LPDDR6, 33% faster and 20% more power efficient than LPDDR5X — 16Gb chips deliver 10.7 Gbps,

🚀 Key TakeawaysSK Hynix has successfully developed the world's first LPDDR6 DRAM, leveraging its cutting-edge 10nm-class (1c) process technology.This new memory boasts a claimed 33% speed increase and 20% better power efficiency compared to LPDDR5X, tha

tech.dragon-story.com

Top 10 Best Ultra-Wide Monitors for Coding and Multitasking in the First Half of 2026

🚀 Key TakeawaysExperience unparalleled immersion and expanded workspace with vast screen sizes up to 57 inches and resolutions reaching Dual 4K (7680 x 2160) or 5K2K WUHD, perfect for multitasking and cinematic viewing.Elevate your gaming and entertainm

tech.dragon-story.com

Transform Your Workspace: Ultimate Productivity, Gaming, and Content Creation Setup

🚀 Key TakeawaysAchieve a premium, ergonomic workspace designed for sustained comfort and peak productivity with the SIHOO M57 Ergonomic Chair and FlexiSpot E7 Pro Standing Desk.Immerse yourself in ultra-responsive gaming and vivid visuals with the LG 34

tech.dragon-story.com