🚀 Key Takeaways

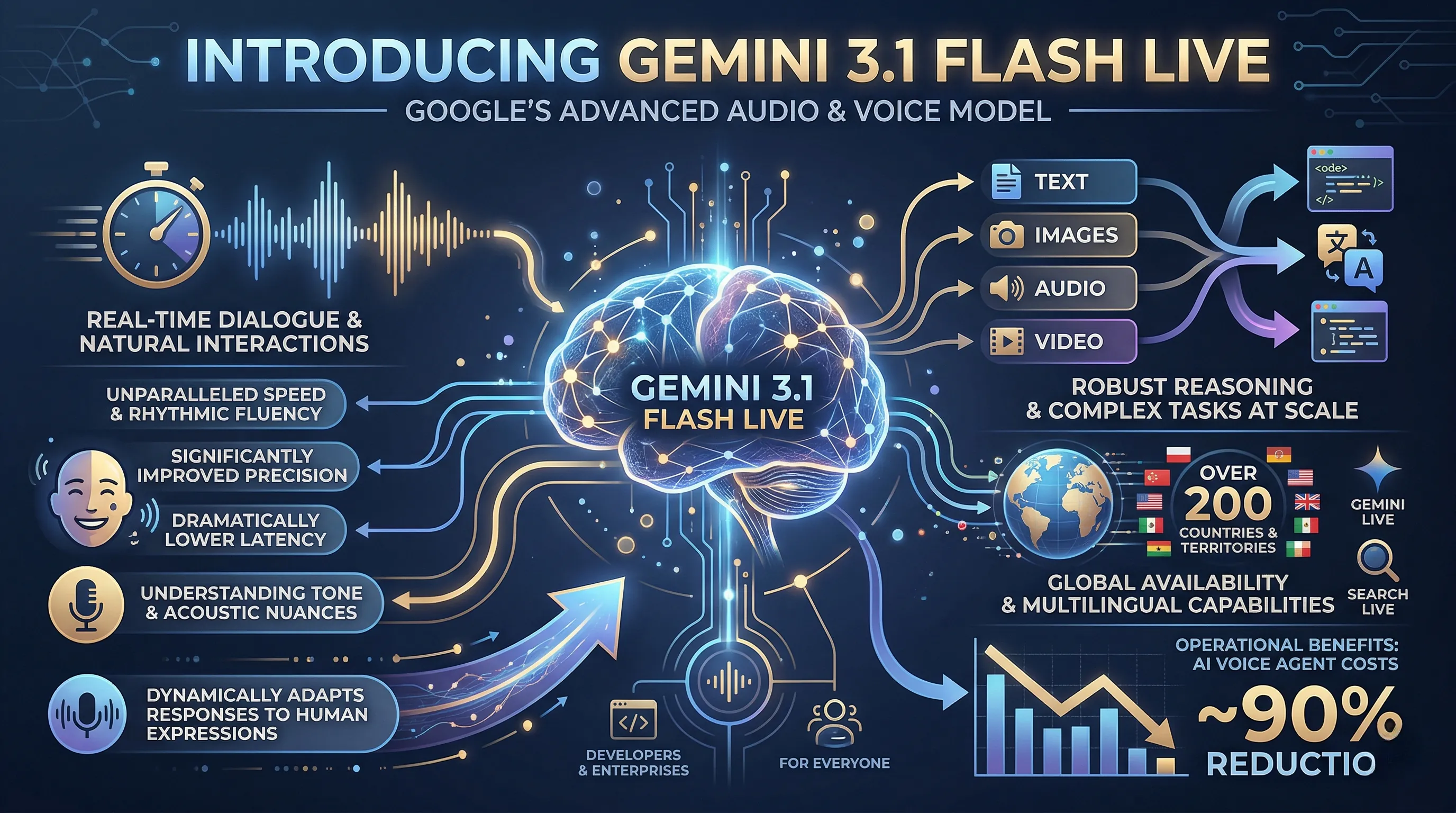

- Gemini 3.1 Flash Live is Google's highest-quality audio and voice model, engineered for real-time, natural dialogue and exceptionally reliable voice-first AI interactions.

- It features unprecedented tonal understanding, dynamically adjusting responses to acoustic nuances like pitch and pace, making conversations feel profoundly human-like and intuitive.

- The model offers improved precision and significantly lower latency, robustly handling complex tasks at scale and slashing AI voice agent costs by an estimated 90%.

- With inherent multilingual capabilities, global availability in over 200 countries, and comprehensive input understanding across text, images, audio, and video, it includes SynthID watermarking for enhanced safety and authenticity.

Gemini 3.1 Flash Live is Google's latest and most advanced audio and voice model, designed for real-time dialogue and natural, voice-first AI interactions.

This cutting-edge model delivers the unparalleled speed and rhythmic fluency essential for the next generation of conversational AI, setting a new standard for how we interact with technology.

At its core, Gemini 3.1 Flash Live boasts significantly improved precision and dramatically lower latency, making voice interactions smoother and more responsive than ever before.

It excels at understanding tone and recognizing subtle acoustic nuances, allowing it to dynamically adapt its responses to human expressions, ensuring conversations feel genuinely intuitive and human-like.

Beyond natural chat, it demonstrates robust reasoning capabilities, efficiently executing complex tasks at scale across various inputs including text, images, audio, and video.

This powerful model is not just for developers and enterprises; it enhances experiences for everyone through Gemini Live and Search Live, now available in over 200 countries and territories due to its inherent multilingual capabilities.

Furthermore, Gemini 3.1 Flash Live promises substantial operational benefits, including a remarkable ~90% reduction in AI voice agent costs, making advanced voice AI more accessible and efficient for businesses worldwide.

1. The Dawn of Voice-First AI: Unpacking Gemini 3.1 Flash's Breakthrough Features

🔹 Beyond the Transcript: The New Audio Intelligence Engine

Gemini 3.1 Flash Live (referred to below as Gemini 3.1 Flash for short) is architected from the ground up as a native speech-to-speech model, engineered for real-time, voice-first dialogue.

Its core capabilities include a dramatic reduction in latency and a sharp increase in precision for voice interactions.

The model now comprehends a vast array of inputs, including text, images, audio, and video, while processing continuous data streams from sources like webcams and screen sharing.

Crucially, it boasts an improved tonal understanding, allowing it to recognize subtle acoustic nuances like pitch and pace in a user's voice.

This is coupled with robust reasoning, multi-step tool-calling, and inherent multilingual support, setting a new technical benchmark for the industry.

🔹 From Clunky Commands to Fluid Conversation

This isn't just about faster responses; it's about creating interactions that feel genuinely human and reliable.

Imagine an AI that doesn't just hear your words but understands your frustration or confusion from your tone and dynamically adjusts its support in real-time.

For developers and enterprises, this means building voice agents that can handle complex tasks in noisy, real-world environments without constant failure, a game-changer for customer experience.

The ability to follow a conversation thread for twice as long as the previous model means you won't have to repeat yourself as often, making interactions more efficient and natural.

You can now have a fluid conversation with an AI, share your screen to show it a problem, and have it execute a solution through tool-calling, all without ever touching your keyboard.

🔹 Industry Validation: Proof in Performance

The leap forward is quantified by its leading 90.8% score on the ComplexFuncBench_Audio benchmark, which tests multi-step function calling.

Leading companies like Verizon and The Home Depot are already reporting significantly more natural and effective conversational workflows with their customers.

With an imperceptible SynthID watermark embedded in all generated audio, Google is also building a foundation of trust and helping to prevent misinformation.

Ultimately, Gemini 3.1 Flash delivers the speed, natural rhythm, and contextual awareness that finally makes the promise of a true voice-first AI feel achievable.

2. Silent Shifts: What's NOT Changing (and Why It's Good)

🔹 The Unspoken Stability: A Focus on Addition

Scouring the update notes for Gemini 3.1 Flash reveals a powerful story not in what’s listed, but in what’s absent.

There are no announcements of deprecated features, no warnings about breaking API changes, and no mention of removed functionalities.

Instead, the entire narrative is one of enhancement and augmentation—a clear signal that the core architecture users and developers rely on remains intact.

Every metric points to building upon the existing foundation, from improved precision in voice interactions to benchmark scores like the 90.8% on ComplexFuncBench, which is described simply as leading "compared to previous model."

🔹 Building Without Breaking: The Developer's Dream Update

This focus on additive innovation translates into one of the most valuable resources for any developer: predictability.

It means you can integrate the more natural, low-latency voice capabilities without rewriting your existing application from the ground up.

Your current voice-first agents won't suddenly fail; they will simply benefit from the underlying improvements, like better conversation retention and tonal understanding.

This creates a seamless upgrade path, allowing teams to adopt powerful new tools without the typical headache and resource drain of a major refactor.

🔹 The Confidence of Continuity: A Mature Ecosystem

This isn't a sign of stagnation; it's a mark of maturity and confidence in the platform.

By choosing to enhance rather than overhaul, Google is showing it respects the investment made by its developer community and enterprise partners.

It ensures that companies who have built complex workflows can adopt the latest improvements for a more natural user experience without risking their current operational stability.

This strategy fosters trust and encourages adoption, signaling that the Gemini ecosystem is a reliable foundation for long-term projects, not a volatile environment of constant, disruptive shifts.

3. Beyond Speed: Unveiling Gemini 3.1 Flash's Performance Leaps & Stealthy Security

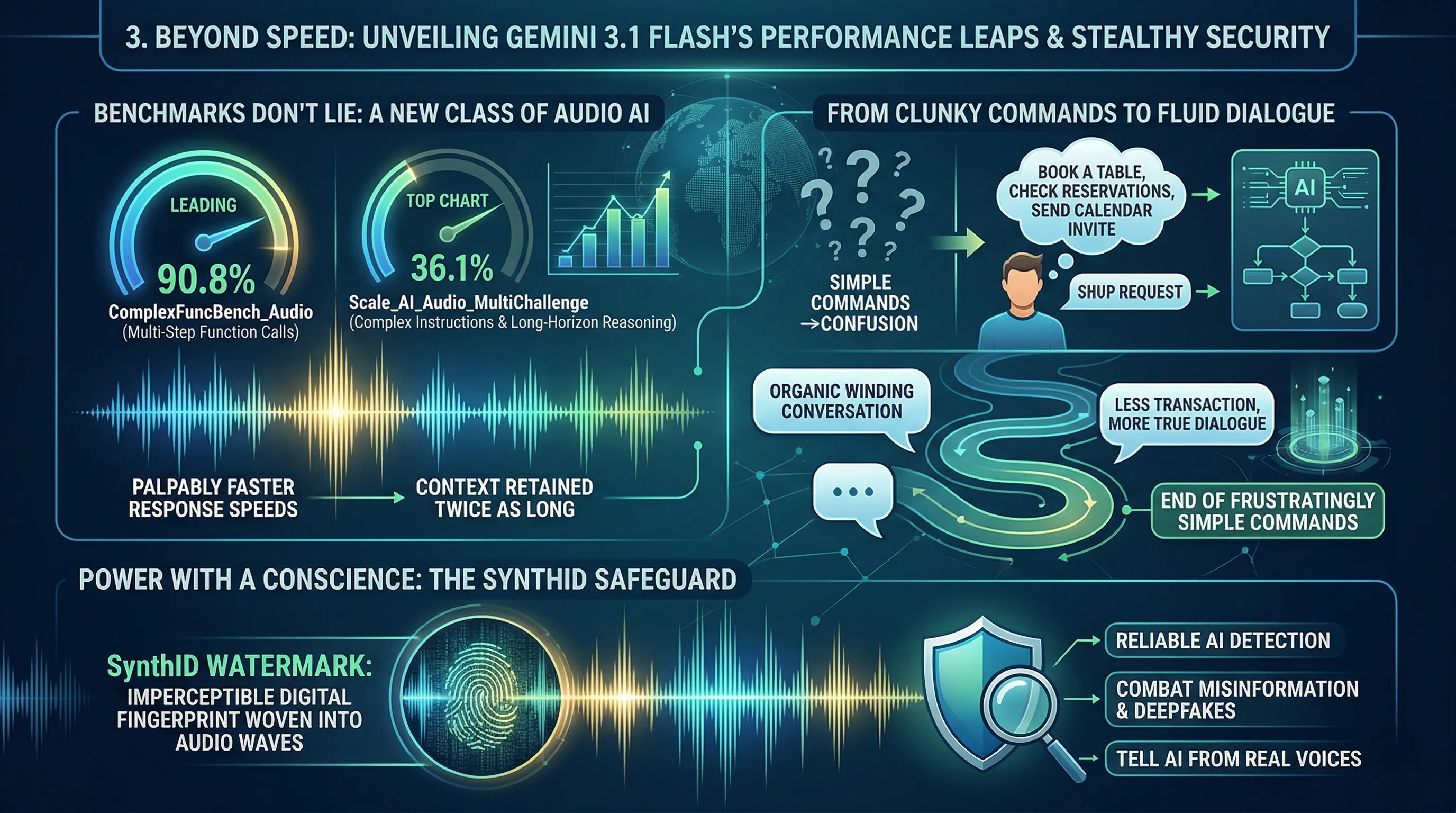

🔹 Benchmarks Don't Lie: A New Class of Audio AI

The performance gains in Gemini 3.1 Flash are not just incremental; they represent a fundamental upgrade in cognitive ability for audio interactions.

It achieves a leading 90.8% score on the ComplexFuncBench_Audio benchmark, specifically showcasing its dominance in understanding and executing multi-step function calls.

Furthermore, it tops the charts with a 36.1% on the Scale_AI_Audio_MultiChallenge, a test that pushes the model's ability for complex instruction following and long-horizon reasoning.

Within the Gemini Live experience, this translates to palpably faster response speeds and the ability to retain conversational context for twice as long as the previous model.

🔹 From Clunky Commands to Fluid Dialogue

This leap in benchmark scores has a massive real-world impact, effectively ending the era of frustratingly simple voice commands.

The high score in multi-step function calling means you can finally speak to your AI assistant naturally, asking it to "Book a table for two at that new Italian place, check for reservations around 8 PM, and send me a calendar invite" all in one go, without the system getting lost.
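To make the idea concrete, here is a minimal Python sketch of what happens on the application side once a model has turned a spoken request like the one above into an ordered list of tool calls. Everything in it is an assumption for illustration: the `ToolCall` shape, the three tool functions, and `run_plan` are hypothetical names, not part of the Gemini Live API, and the tools return canned data instead of calling real booking or calendar services.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class ToolCall:
    """One step the model asks the application to run (hypothetical shape)."""
    name: str
    args: Dict[str, Any] = field(default_factory=dict)


# Hypothetical application-side tools. Real ones would call external services.
def check_availability(restaurant: str, time: str, party_size: int) -> Dict[str, Any]:
    return {"restaurant": restaurant, "time": time, "available": True}


def book_table(restaurant: str, time: str, party_size: int) -> Dict[str, Any]:
    return {"confirmation": f"{restaurant}-{time}-{party_size}"}


def send_calendar_invite(title: str, time: str) -> Dict[str, Any]:
    return {"invite_sent": True, "title": title, "time": time}


# Registry mapping tool names to the functions that implement them.
TOOLS: Dict[str, Callable[..., Dict[str, Any]]] = {
    "check_availability": check_availability,
    "book_table": book_table,
    "send_calendar_invite": send_calendar_invite,
}


def run_plan(calls: List[ToolCall]) -> List[Dict[str, Any]]:
    """Run each call in order, stopping early if a step reports no availability."""
    results: List[Dict[str, Any]] = []
    for call in calls:
        tool = TOOLS.get(call.name)
        if tool is None:
            raise KeyError(f"unknown tool: {call.name}")
        result = tool(**call.args)
        results.append(result)
        if result.get("available") is False:
            break  # no point booking or inviting if nothing is free
    return results


# The single spoken request, expressed as three ordered steps.
plan = [
    ToolCall("check_availability", {"restaurant": "Trattoria", "time": "20:00", "party_size": 2}),
    ToolCall("book_table", {"restaurant": "Trattoria", "time": "20:00", "party_size": 2}),
    ToolCall("send_calendar_invite", {"title": "Dinner at Trattoria", "time": "20:00"}),
]
results = run_plan(plan)
```

The point of the sketch is the ordering and the early exit: one request fans out into several dependent steps, and a failed check stops the chain instead of booking blindly.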

The ability to follow complex instructions means the AI isn't just waiting for keywords; it's genuinely processing the logic behind your requests.

And with conversation retention doubled, you can have a winding, organic conversation, knowing the AI remembers what you were discussing five minutes ago, making interactions feel less like a transaction and more like a true dialogue.

🔹 Power with a Conscience: The SynthID Safeguard

With this new level of conversational realism comes a profound responsibility to ensure authenticity.

Google is addressing this head-on by embedding SynthID watermarking into all generated audio output.

Think of SynthID as a digital fingerprint, imperceptible to the human ear but woven directly into the audio waves.

This feature allows AI-generated audio to be detected, providing a critical tool to combat misinformation and malicious deepfakes. As AI voices become more human, it offers a way to tell them apart from the real thing.

4. Conversational Intuition: How Gemini 3.1 Flash Elevates User Interaction

🔹 Beyond Words: Decoding the Rhythm of Speech

Gemini 3.1 Flash is engineered from the ground up for the nuances of human dialogue.

Its core advancements focus on dramatically improved precision and lower latency for voice interactions, essentially closing the awkward gap between when you finish speaking and when the AI responds.
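If you want to put a number on that gap in your own prototype, a small timing helper is enough to get started. The sketch below is a generic illustration, not the Gemini Live API: `measure_latency` and `echo_agent` are hypothetical names, and the stand-in agent simply echoes text, where a real one would stream audio to and from a voice model.

```python
import time
from typing import Callable, Tuple


def measure_latency(
    respond: Callable[[str], str],
    utterance: str,
    clock: Callable[[], float] = time.perf_counter,
) -> Tuple[str, float]:
    """Return the agent's reply and the seconds elapsed while producing it."""
    start = clock()
    reply = respond(utterance)
    return reply, clock() - start


def echo_agent(utterance: str) -> str:
    """Hypothetical stand-in for a real voice agent call."""
    return f"You said: {utterance}"


reply, seconds = measure_latency(echo_agent, "hello")
print(f"{reply!r} took {seconds:.6f}s")
```

Passing the clock in as a parameter keeps the helper easy to test with a fake clock, which matters once you start comparing latency across models or network conditions.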

The model is now significantly faster and better at understanding vocal tone, recognizing subtle acoustic cues like pitch, pace, and volume.

Crucially, it can dynamically adjust its own responses based on user expressions like frustration or confusion, and it can follow a single conversation thread for twice as long as its predecessor.

🔹 From Clunky Commands to Fluid Conversation

This isn't just about faster answers; it's about better conversations.

The lower latency and tonal understanding transform interactions from a stilted, command-and-response sequence into a natural, flowing dialogue.

Imagine asking your AI for help with a complex task and sighing in frustration; instead of ploughing ahead, Gemini 3.1 Flash can recognize that cue and might offer to break the problem down differently.

This ability to understand the *feeling* behind your words creates a far more intuitive and helpful experience, whether you're using Gemini Live or the new global Search Live feature.

Because it retains context for longer, you no longer have to constantly repeat yourself, allowing for complex, multi-step problem-solving that feels less like programming a machine and more like collaborating with a partner.

🔹 The Expert Take: A More Human-Centric AI

Taken together, these upgrades point to a shift from a voice *interface* to a true voice *partner*.

This is the kind of natural rhythm and intuitive response that developers and enterprises have been waiting for to build the next generation of truly helpful voice-first AI agents.

Industry giants like Verizon and The Home Depot are already providing positive feedback, highlighting how the model's more natural conversational abilities are improving their workflows.

The result is a more intuitive, reliable, and fundamentally more human experience for everyone, delivering on the promise of an AI that doesn't just hear your words, but understands your intent.

5. Revolutionizing Real-world AI: Gemini 3.1 Flash's Transformative Workflow Power

🔹 The Blueprint for Accessible AI: Power, Price, and Pipelines

Gemini 3.1 Flash is engineered for scaled, real-world deployment, enabling robust reasoning and complex task execution through capabilities like tool-calling, webcam access, and screen sharing integration.

Its true disruptive power, however, lies in its economic accessibility, slashing AI voice agent costs by an astonishing ~90%.
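As a quick back-of-the-envelope check on what a roughly 90% reduction would mean for a budget, the arithmetic can be sketched in a few lines of Python. The 90% figure is the estimate quoted above; the baseline spend is a made-up number chosen only for illustration, and `cost_after_reduction` is a hypothetical helper.

```python
def cost_after_reduction(baseline_cost: float, reduction: float = 0.90) -> float:
    """Return the cost remaining after cutting `reduction` (a fraction from 0 to 1)."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return baseline_cost * (1.0 - reduction)


# Hypothetical monthly spend on voice agents before the switch.
baseline = 10_000.00
new_cost = cost_after_reduction(baseline)
savings = baseline - new_cost

print(f"new cost: {new_cost:,.2f}, savings: {savings:,.2f}")
```

With these illustrative numbers, a 10,000 monthly bill would fall to about 1,000. Actual savings depend on real usage and pricing, which the announcement summary here does not break down.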

This isn't just a lab experiment; it's being deployed through a multi-tiered strategy targeting every user segment.

Developers gain access via the Gemini Live API in Google AI Studio, enterprises can integrate it through Gemini Enterprise for Customer Experience, and the general public interacts with it daily via Search Live and Gemini Live.

🔹 From Frustrated Customers to Global Queries: The Real-World Impact

This isn't just about making bots talk; it's about making them understand and react.

For enterprises, this means building voice agents that can operate effectively in noisy environments and recognize acoustic nuances like a customer's rising frustration, dynamically adjusting their response for a more natural, empathetic interaction.

Developers can now build sophisticated, voice-first agents that complete complex, multi-step tasks at scale without worrying about prohibitive operational costs.

For everyday users, the model’s inherent multilingual capability is fueling the global expansion of Search Live to over 200 countries and territories, making advanced, conversational search a worldwide reality.

🔹 The Enterprise Seal of Approval: Early Adopters Report a Paradigm Shift

Industry giants are already validating the model's impact on their workflows.

Companies like Verizon, LiveKit, and The Home Depot are reporting significant improvements, praising the enhanced, natural conversational flow in their customer and internal systems.

This positive feedback signals a critical transition from experimental AI to mission-critical business infrastructure.

The combination of advanced conversational intelligence and radical cost efficiency is empowering businesses to move beyond simple chatbots and deploy truly helpful AI assistants that fundamentally improve their operational DNA.

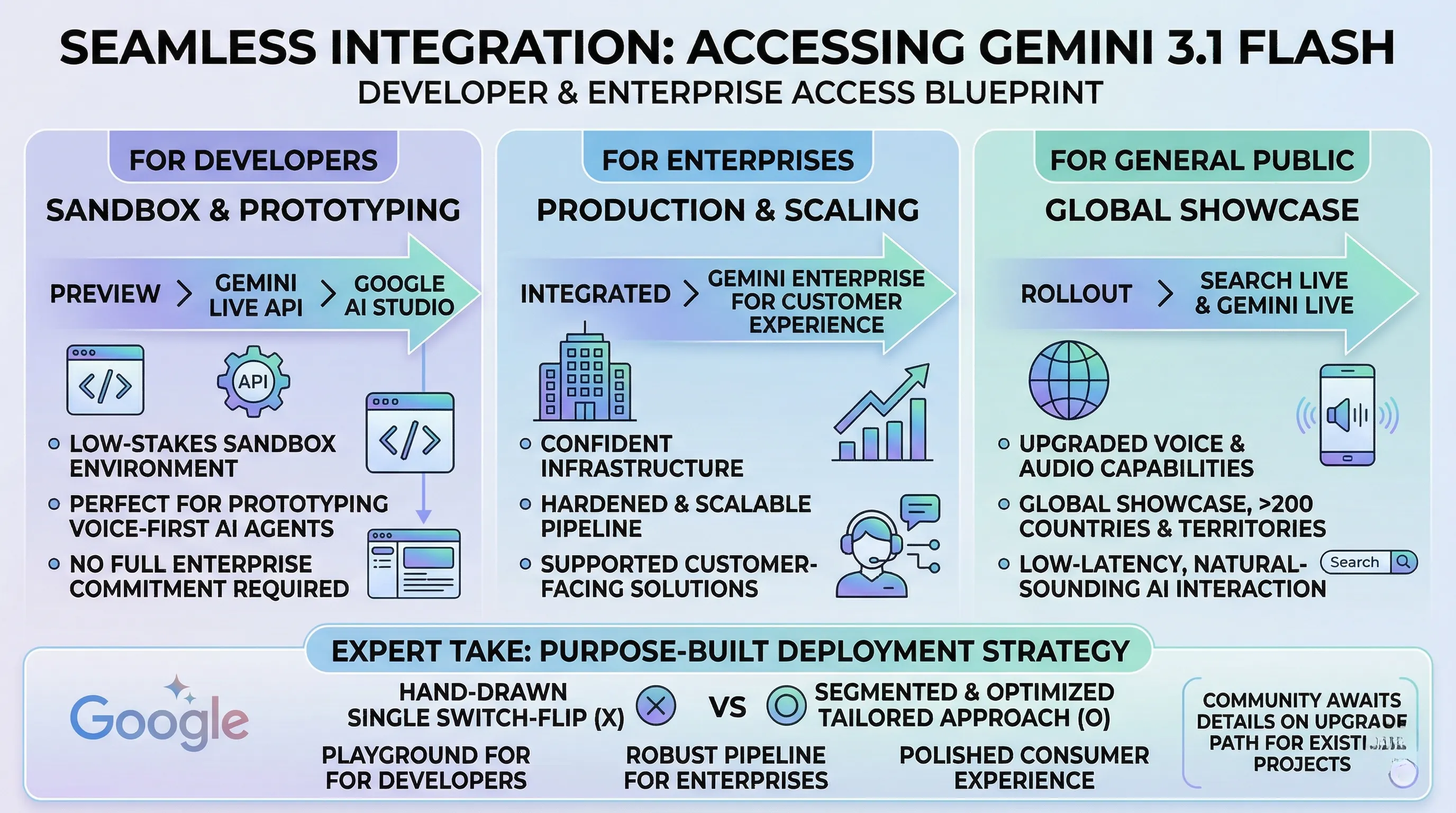

6. Seamless Integration: Accessing Gemini 3.1 Flash for Developers & Enterprises

🔹 The Access Blueprint: A Multi-Channel Rollout

Google has defined three distinct pathways for accessing Gemini 3.1 Flash, catering to different user segments.

For developers eager to experiment, the model is available as a preview through the Gemini Live API directly within Google AI Studio.

Enterprises looking to deploy this technology at scale will find it integrated into Gemini Enterprise for Customer Experience.

Finally, for the general public, the upgraded voice and audio capabilities are being rolled out through Search Live and Gemini Live.

Notably, the announcement outlines these access points but does not include explicit migration steps or guidance for developers currently using previous models.

🔹 From Sandbox to Production: Tailored On-Ramps

This segmented approach is incredibly strategic, removing friction for each user type.

Giving developers API access in AI Studio creates a low-stakes sandbox environment, perfect for prototyping the next generation of voice-first AI agents without requiring a full enterprise commitment.

The dedicated enterprise channel suggests a more hardened, scalable, and supported pipeline, ensuring that companies building customer-facing solutions can do so with confidence in the infrastructure.

By pushing the model to everyone via Search Live across more than 200 countries and territories, Google is not just updating a product; it's creating a global showcase for what low-latency, natural-sounding AI interaction feels like.

🔹 Expert Take: A Purpose-Built Deployment Strategy

The rollout strategy for Gemini 3.1 Flash isn't a simple switch-flip; it's a carefully considered plan to maximize adoption and impact.

Instead of a one-size-fits-all solution, Google provides a developer playground, a robust enterprise pipeline, and a polished consumer experience.

This ensures that whether you're building a complex, multi-step voice agent for a client or just asking Search a question, you're interacting with the model through a channel optimized for your needs.

The key takeaway is that access is clearly defined by user role, even as the community awaits further details on the upgrade path for existing projects.

7. Industry Acclaim: Early Feedback and the Road Ahead

🔹 Enterprise Titans Signal Early Approval

Initial reports from industry heavyweights are painting a very positive picture for Gemini 3.1 Flash Live's real-world performance.

Companies including Verizon, LiveKit, and The Home Depot have provided early feedback, specifically praising the model's capacity for more natural and improved conversations within their operational workflows.

This endorsement from major enterprise players suggests the model is already meeting its goal of enhancing customer experience at a massive scale.

🔹 The End of Robotic Customer Service?

This isn't just about a slightly better chatbot; it's about fundamentally changing the nature of automated interaction.

For a customer contacting Verizon or The Home Depot, this translates to an AI that doesn't just hear words but understands tone, pace, and nuance, leading to less frustration and faster resolutions.

For developers using platforms like LiveKit, it means having the tools to build voice-first agents that can handle complex, multi-step tasks in noisy, real-world environments without sounding robotic or getting easily confused.

The result is a more intuitive and human-like experience that could finally move enterprise AI beyond the rigid, frustrating phone trees of the past.

🔹 A Promising Start, But The Jury's Still Out

While this curated feedback from enterprise partners is a powerful validation of the technology's potential, it represents a controlled first look.

What is still missing is wider sentiment from the independent developer community, along with a public log of discovered bugs and edge cases.

The road ahead will involve seeing how Flash Live performs in the wild, once it's stress-tested by a broader base of creators and users who will undoubtedly push its capabilities to their absolute limits.

8. 💡 Tech Talk: Making Sense of the Jargon

- Speech-to-Speech Model: Imagine talking to an AI that doesn't just write down what you say and then type back an answer, but actually listens to your voice and then *speaks* its reply directly. It's like having a real-time voice conversation where both sides are speaking, not typing or reading.

- Low Latency: Think about talking to someone without any awkward pauses or delays. "Low latency" means the AI responds super quickly, almost instantly, making the conversation flow naturally like talking to a real person.

- Acoustic Nuances: These are the subtle details in someone's voice – like whether they sound excited, tired, or confused (the pitch, pace, and tone). The AI is smart enough to pick up on these tiny cues, just like a good listener would, to understand how you truly feel.

- Multi-step Function Calling: Picture giving a smart assistant a complex chore, like "Order pizza for tonight, make sure it's pepperoni, and then set a reminder for me to pick it up at 7 PM." Instead of just doing one thing, it can break down and complete a series of related tasks one after another, all based on your initial request.

- Long-horizon Reasoning: This is like planning a whole trip rather than just the first leg. The AI can remember what you said much earlier in a conversation and use that information to make smart decisions or continue a complex task over a longer period, keeping the bigger picture in mind.

- SynthID Watermark: It's like a secret, invisible signature embedded directly into the AI's generated voice output. You can't hear it, but a special tool can detect it, indicating the audio was created by AI. This helps prevent misinformation and makes it easier to tell if something is AI-generated.
