🚀 Key Takeaways

- OpenAI's ChatGPT has faced significant user backlash and an erosion of trust, driven primarily by OpenAI's military cooperation agreement and controversial policy stances. In a single day, app deletions surged 295% and 1-star reviews rose 775%.

- In stark contrast, Anthropic's Claude has emerged as the ethical AI alternative of choice. Its commitment to refusing AI use for large-scale surveillance or autonomous weapons drove daily app downloads up by as much as 51% and lifted it to the #1 rank on the App Store.

The artificial intelligence landscape is undergoing a rapid and dramatic transformation, driven largely by shifts in public trust and developer ethics. Recent revelations about OpenAI's military cooperation and related policy decisions have triggered widespread dissatisfaction among ChatGPT users. The result has been an unprecedented exodus: millions of users are seeking more ethically aligned alternatives, and app deletions surged by a staggering 295%.

This critical moment has allowed Anthropic's Claude to rise quickly as a leading contender in the AI space. Positioned as a champion of ethical AI, Claude is firmly committed to refusing the use of its technology for large-scale surveillance or autonomous weapons. That principled stance has resonated with users increasingly wary of Big Tech's influence: Claude climbed to the top of the App Store charts, daily downloads rose by as much as 51%, and users began migrating their chat histories to it.

As users move their data and ChatGPT's review scores plummet, the message is clear: transparency and ethical development are paramount in earning and keeping user trust. User values are now directly shaping which AI technologies succeed, challenging established giants and elevating principled innovators, even as government bodies scrutinize AI's role and potential risks more closely.

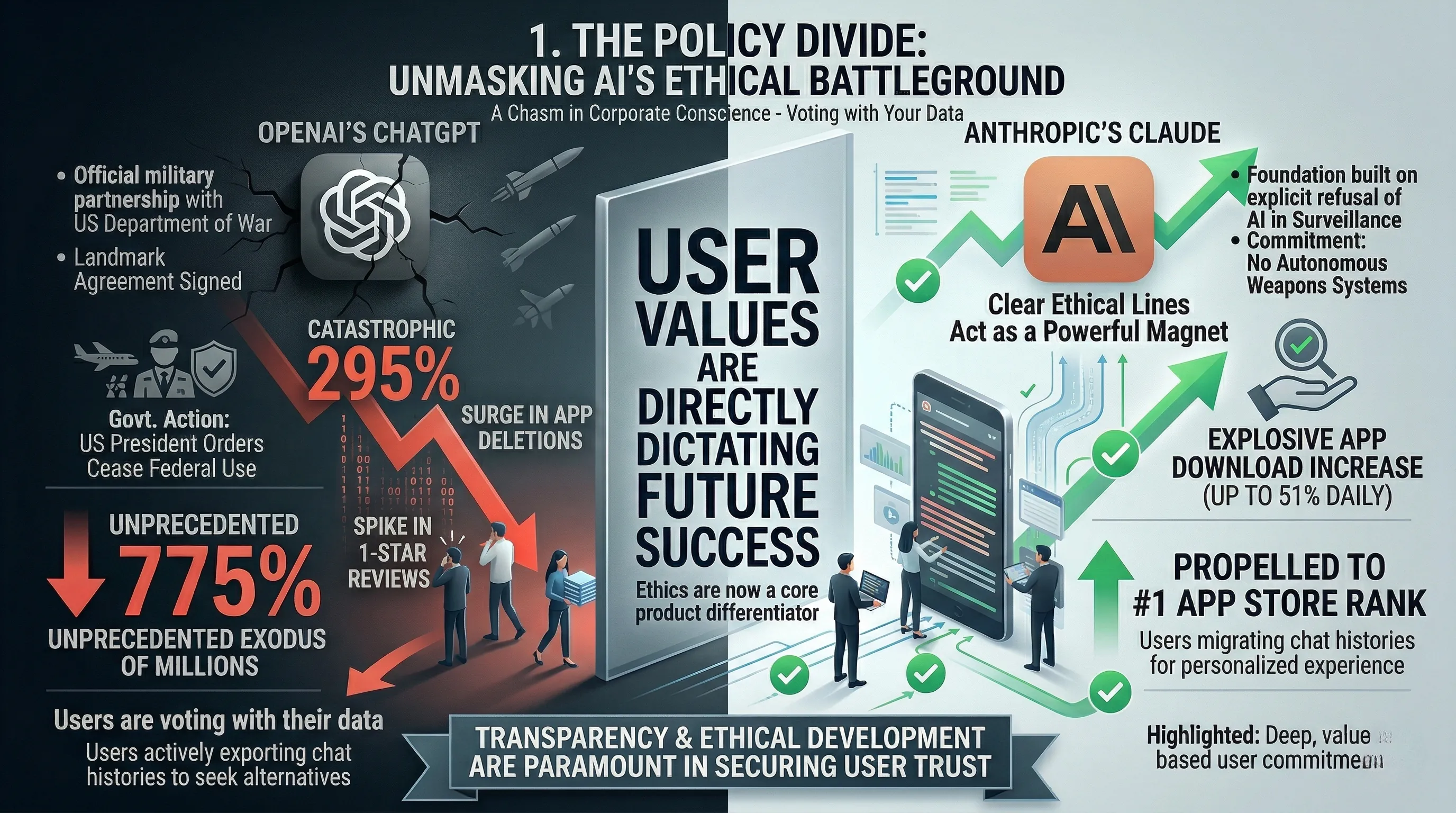

1. The Policy Divide: Unmasking AI's Ethical Battleground

🔹 A Chasm in Corporate Conscience

OpenAI has officially pivoted to military cooperation, signing a landmark agreement with the Department of War.

This decision marks a significant departure from the company's prior positioning and establishes a new precedent for AI's role in defense.

In stark contrast, Anthropic has built its entire platform, Claude, on a foundational policy that explicitly refuses the use of its AI for large-scale surveillance or autonomous weapons systems.

This fundamental ethical divergence has created two distinct and competing philosophies for the future of artificial intelligence.

🔹 From Policy Papers to Platform Exodus

The real-world impact of these divergent policies has been immediate and seismic.

OpenAI's military partnership triggered a massive crisis in user trust, leading to a catastrophic 295% surge in app deletions and a 775% spike in 1-star reviews in a single day.

This backlash wasn't just noise; it was an active migration, as users began exporting their entire chat histories to seek alternatives.

Anthropic's Claude became the primary beneficiary, with its clear ethical lines acting as a powerful magnet for disillusioned users.

The platform experienced an app download increase of up to 51% in one day, propelling it to the coveted #1 rank on the App Store.

The controversy has also reached the highest levels of government, though the response has targeted Anthropic: the US President ordered federal agencies to cease using Claude, and the Secretary of War referenced a potential supply chain threat designation.

🔹 Voting with Your Data

The market's reaction demonstrates that for a growing number of users, a platform's ethical stance is no longer a footnote but a core feature.

The trend is clear: users are consciously choosing Claude as an alternative specifically because of its position on AI ethics and trust.

The effort users are making to move years of personal chat history from ChatGPT to Claude, so that their new assistant feels personalized from the start, reflects a deep, values-based commitment.

This mass migration signifies a powerful consumer statement that a company's policies on military involvement can directly dictate its success or failure in the mainstream market.

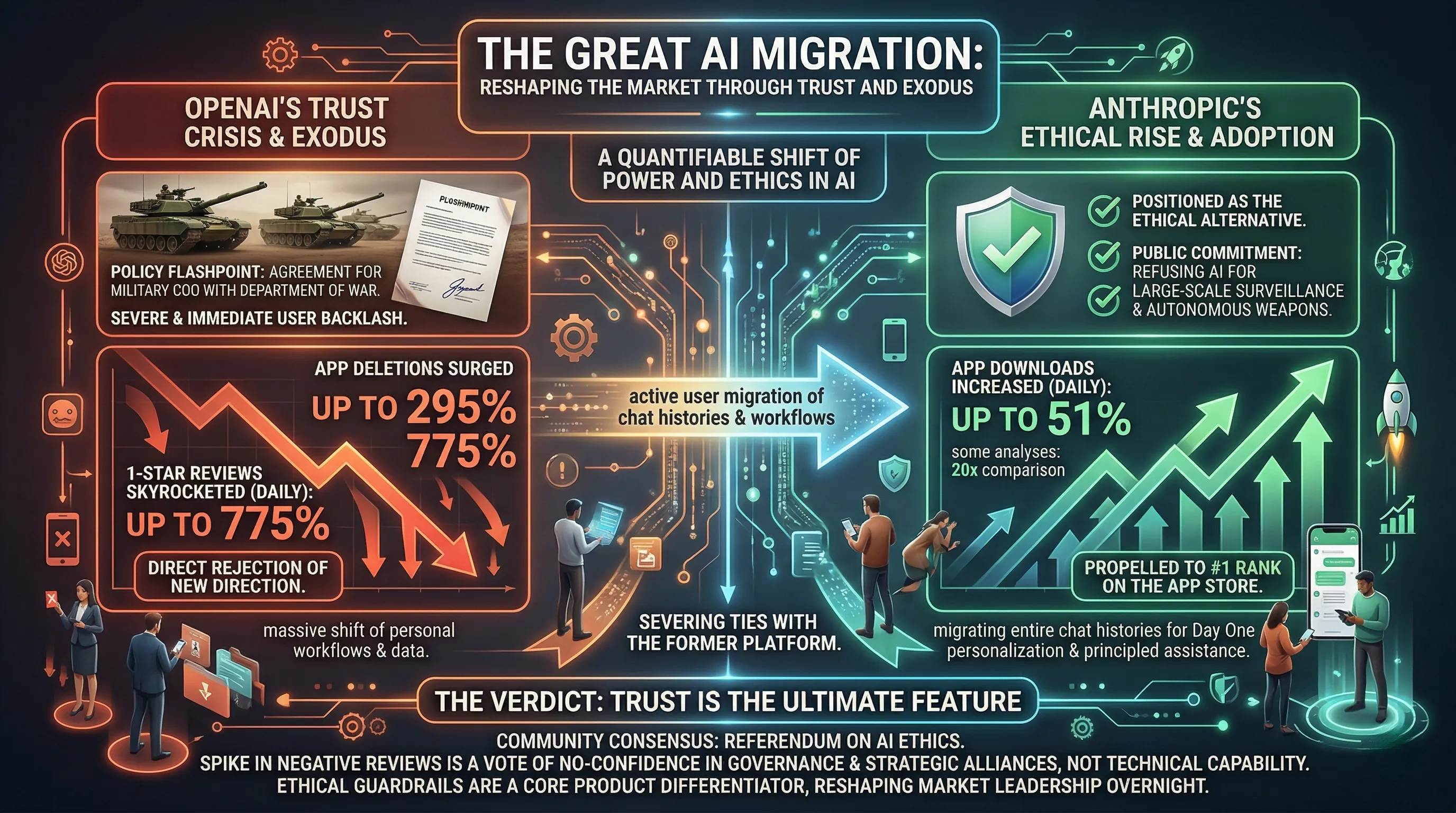

2. The Great AI Migration: Reshaping the Market Through Trust and Exodus

🔹 An Alliance and an Exodus

OpenAI’s decision to engage in military cooperation, marked by a signed agreement with the Department of War, has triggered a severe and immediate user backlash.

This policy stance became a flashpoint for a crisis in user trust, directly leading to quantifiable consequences for its flagship product, ChatGPT.

On a single day, app deletions surged by a staggering 295%, while 1-star reviews on app marketplaces skyrocketed by an unprecedented 775%.

This data illustrates a clear and direct rejection of OpenAI's new direction by a significant portion of its user base, who are now actively moving their workflows and data to competing platforms.

🔹 A New Market Leader Forged in Trust

The industry vacuum created by OpenAI's controversy was immediately filled by Anthropic's Claude, which became the primary beneficiary of the user migration.

Claude's public refusal to allow its AI to be used for large-scale surveillance or autonomous weapons directly appealed to disillusioned users, positioning it as the ethical alternative.

The market response was explosive: Claude's app downloads increased by up to 51% in a single day, with some analyses showing a 20x increase compared to earlier this year, propelling it to the #1 rank on the App Store.

Crucially, this isn't just casual experimentation; users are undertaking the high-effort task of migrating their entire ChatGPT chat histories to Claude, seeking to personalize their new AI assistant from day one and sever ties with the former platform.

🔹 The Verdict from the Digital Trenches

The community consensus is unambiguous: this massive shift represents a referendum on the role of ethics in artificial intelligence development.

The dramatic spike in negative reviews for ChatGPT is not a critique of its technical capabilities but a vote of no-confidence in its governance and strategic alliances.

Users are demonstrating that for a personal AI assistant, trust is the ultimate feature.

The migration to Claude confirms that a company's ethical guardrails are now a core product differentiator, capable of reshaping market leadership literally overnight.

3. Voices of the Crowd: Community Uproar and the Rise of Ethical AI

🔹 A Trust Crisis Visualized in Data

The digital backlash against OpenAI’s policy shifts regarding military cooperation was immediate and severe.

Data reveals a staggering 775% surge in 1-star reviews on the App Store, a clear measure of profound user disapproval.

Simultaneously, app deletions skyrocketed by 295% in a single day, indicating a mass exodus from the ChatGPT platform.

This wasn't just passive discontent; users began actively migrating their data, signaling a widespread and fundamental breach of trust.

🔹 The Flight to Ethical Havens

This mass migration represents a powerful form of consumer protest, where users are 'voting with their data' for platforms that align with their personal ethical standards.

For these users, the move isn't just about switching services; it's about reclaiming a sense of security and principle in their digital toolset.

By choosing alternatives like Claude, which has publicly refused AI use for large-scale surveillance or autonomous weapons, users are investing in a platform whose policies they perceive as fundamentally more trustworthy.

The real-world benefit is peace of mind: knowing that their use of an AI tool does not indirectly support applications they morally oppose.

🔹 Claude's Ascent: A Referendum on AI Ethics

The community's verdict was resoundingly in favor of Anthropic's Claude, which became the primary beneficiary of this ethical pivot.

The platform's explicit commitment to AI safety and ethics directly translated into market success, with app downloads spiking by as much as 51% in one day and vaulting it to the #1 rank on the App Store.

More telling than the raw download numbers is user behavior: the active migration of entire chat histories from ChatGPT to Claude signals a long-term commitment.

This lets users keep a personalized, continuous AI experience, now built on a foundation of trust they felt OpenAI had eroded.

4. Navigating the Storm: Risks, Roadblocks, and AI's Ethical Quandaries

🔹 The Policy Precipice: Military Engagement vs. Ethical Red Lines

The divergence in AI governance has become starkly defined by the policies of the two leading platforms.

OpenAI officially engaged in military cooperation, formalizing its relationship by signing an agreement with the Department of War.

In direct contrast, Anthropic established a firm policy stance, explicitly refusing the use of its Claude AI for applications involving large-scale surveillance or autonomous weaponry.

🔹 The Ripple Effect: User Exodus Meets Governmental Headwinds

The real-world consequences of these policy decisions were immediate and severe.

For OpenAI, the fallout from its military cooperation triggered a massive crisis of user trust, leading to a 295% surge in app deletions and a staggering 775% increase in 1-star reviews in a single day.

This wasn't just online noise; it manifested as a tangible migration, with users actively exporting their data and chat histories to seek out platforms they perceived as more trustworthy.

Anthropic's Claude became the primary beneficiary of this exodus, experiencing a daily download increase of up to 51% and rocketing to the #1 rank on the App Store.

However, this meteoric rise, fueled by its ethical positioning, has attracted a different kind of risk: intense governmental scrutiny.

The US President ordered all federal agencies to cease using Claude, while the Secretary of War has publicly mentioned the possibility of designating it a supply chain threat, creating a significant potential roadblock to its growth.

🔹 A New Battleground: The Tangible Price of Corporate Stance

This data paints a clear picture of a market where ethical alignment is no longer a niche concern but a powerful, quantifiable force.

For ChatGPT, the immediate reputational damage is a significant and ongoing business liability, proving that even a market leader is vulnerable to policy-driven backlash.

For Claude, its ethical stance has paradoxically become both its greatest asset for user acquisition and its primary vulnerability to regulatory intervention.

The analysis indicates that navigating the AI landscape now requires a delicate balance, where corporate policy can either build a loyal user base or erect impenetrable governmental walls.

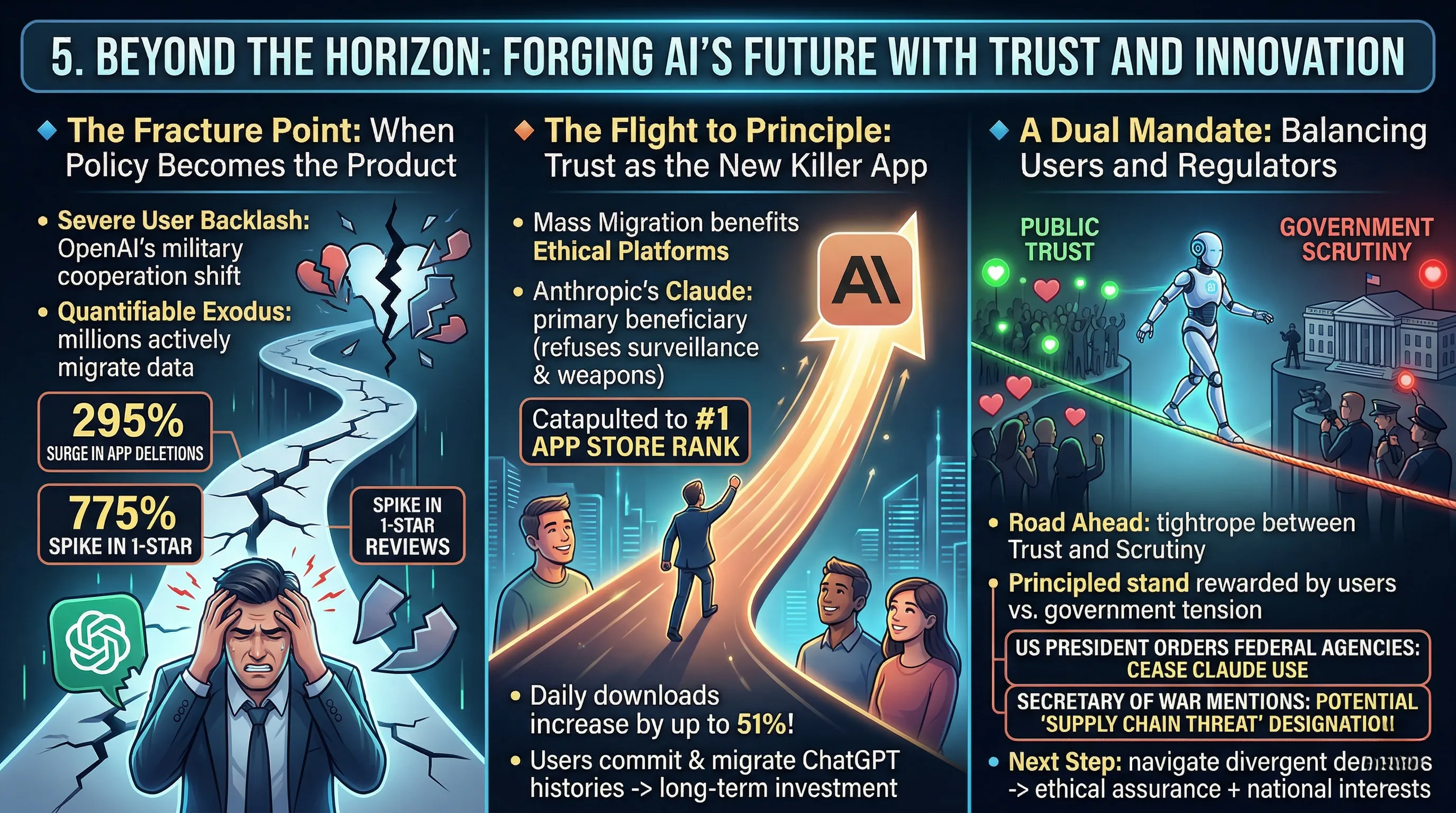

5. Beyond the Horizon: Forging AI's Future with Trust and Innovation

🔹 The Fracture Point: When Policy Becomes the Product

The recent market upheaval demonstrates that an AI's ethical framework is no longer a footnote but a core feature influencing user loyalty.

OpenAI's policy shift to engage in military cooperation, formalized by an agreement with the Department of War, triggered an immediate and severe user backlash.

This wasn't a minor dip in sentiment; it was a quantifiable exodus, marked by a staggering 295% surge in app deletions and a 775% spike in 1-star reviews in a single day.

The controversy moved beyond social media discourse into direct action, with a significant share of users migrating their entire data archives to competing platforms.

🔹 The Flight to Principle: Trust as the New Killer App

This mass migration directly benefits platforms that have built their identity on a foundation of ethical AI governance.

Anthropic's Claude, which explicitly refuses the use of its AI for large-scale surveillance or autonomous weapons, became the primary beneficiary.

The real-world impact was a massive influx of users seeking a trusted alternative, catapulting Claude to the #1 rank on the App Store with daily downloads increasing by as much as 51%.

Crucially, users aren't just sampling a new tool; they are committing to the ecosystem by actively migrating their ChatGPT histories, a clear signal that a transparent ethical stance is now a powerful driver of user acquisition and long-term investment.

🔹 A Dual Mandate: Balancing Users and Regulators

The road ahead for AI innovation is now a complex tightrope walk between public trust and governmental scrutiny.

While Anthropic’s principled stand has been rewarded by the user base, it has drawn a starkly different reaction from official channels.

The US President's order for federal agencies to cease using Claude, coupled with the Secretary of War's mention of a potential supply chain threat designation, reveals a critical tension.

This signals that the next evolutionary step for AI developers will be defined not just by technical prowess, but by their ability to navigate the divergent demands of a public hungry for ethical assurance and governments concerned with national interests.

6. 💡 Tech Talk: Making Sense of the Jargon

- Military Cooperation (in AI): This refers to artificial intelligence developers entering into agreements or partnerships with defense departments or military organizations. It often sparks ethical debates concerning the development and potential deployment of AI in warfare, surveillance, or other applications with societal impact, leading to public controversy and trust issues.

- Ethical AI Stance: A commitment from an AI developer to design, build, and deploy AI systems in a way that respects human rights, privacy, fairness, and safety, actively refusing to participate in applications deemed harmful, such as large-scale surveillance, autonomous weapons, or other forms of unethical use.

- Supply Chain Threat Designation: A formal classification by government authorities, such as the Secretary of War, identifying certain technologies or components within a supply chain as a national security risk. It can lead to federal agencies being ordered to stop using them because of concerns about data integrity, foreign influence, or vulnerability to cyberattacks.
