🚀 Key Takeaways

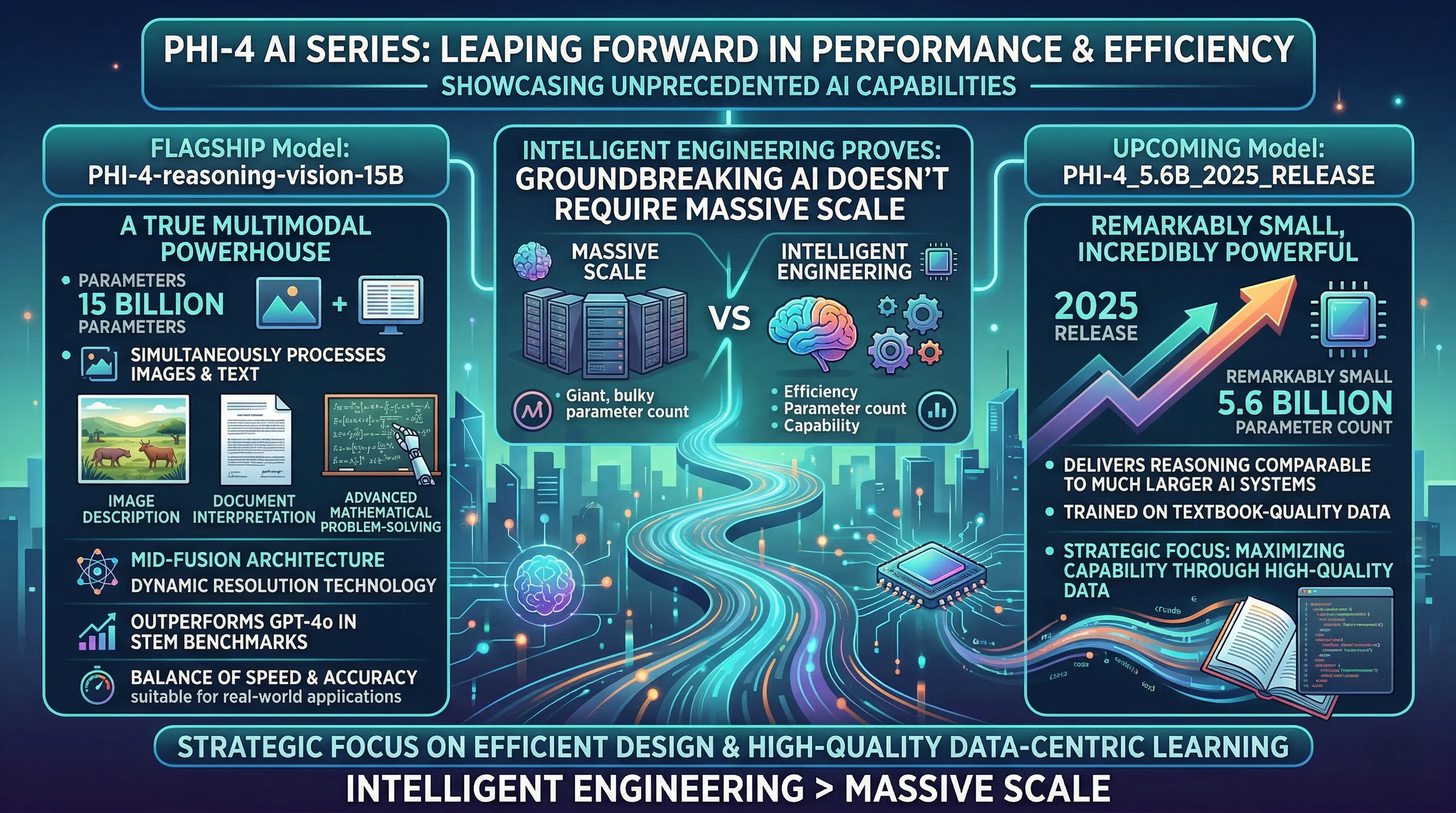

- The Phi-4 series redefines AI performance, achieving superior reasoning and multimodal capabilities with significantly fewer computational resources than industry giants, setting new standards for efficiency and accuracy.

The new Phi-4 AI series represents a significant leap forward in artificial intelligence, showcasing unprecedented performance and efficiency across a range of complex tasks.

The flagship Phi-4-reasoning-vision-15B model, with its 15 billion parameters, is a truly multimodal powerhouse, capable of simultaneously processing images and text to excel in areas like image description, document interpretation, and advanced mathematical problem-solving.

Its innovative mid-fusion architecture and dynamic resolution technology enable it to outperform models like GPT-4o in critical STEM benchmarks, demonstrating a superior balance of speed and accuracy suitable for real-world applications.

Beyond the 15B model, the upcoming Phi-4 5.6B, slated for release in 2025, further underscores the series' commitment to efficiency.

Despite its remarkably small count of 5.6 billion parameters, this model is trained on textbook-quality data and designed to deliver reasoning performance comparable to much larger AI systems.

Both Phi-4 models highlight a strategic focus on maximizing capability through efficient design and high-quality data-centric learning, proving that groundbreaking AI doesn't always require massive scale, but rather intelligent engineering.

1. Engineering Marvel: Unpacking Phi-4's Revolutionary Architecture & Data Strategy

🔹 The Blueprint for Efficiency

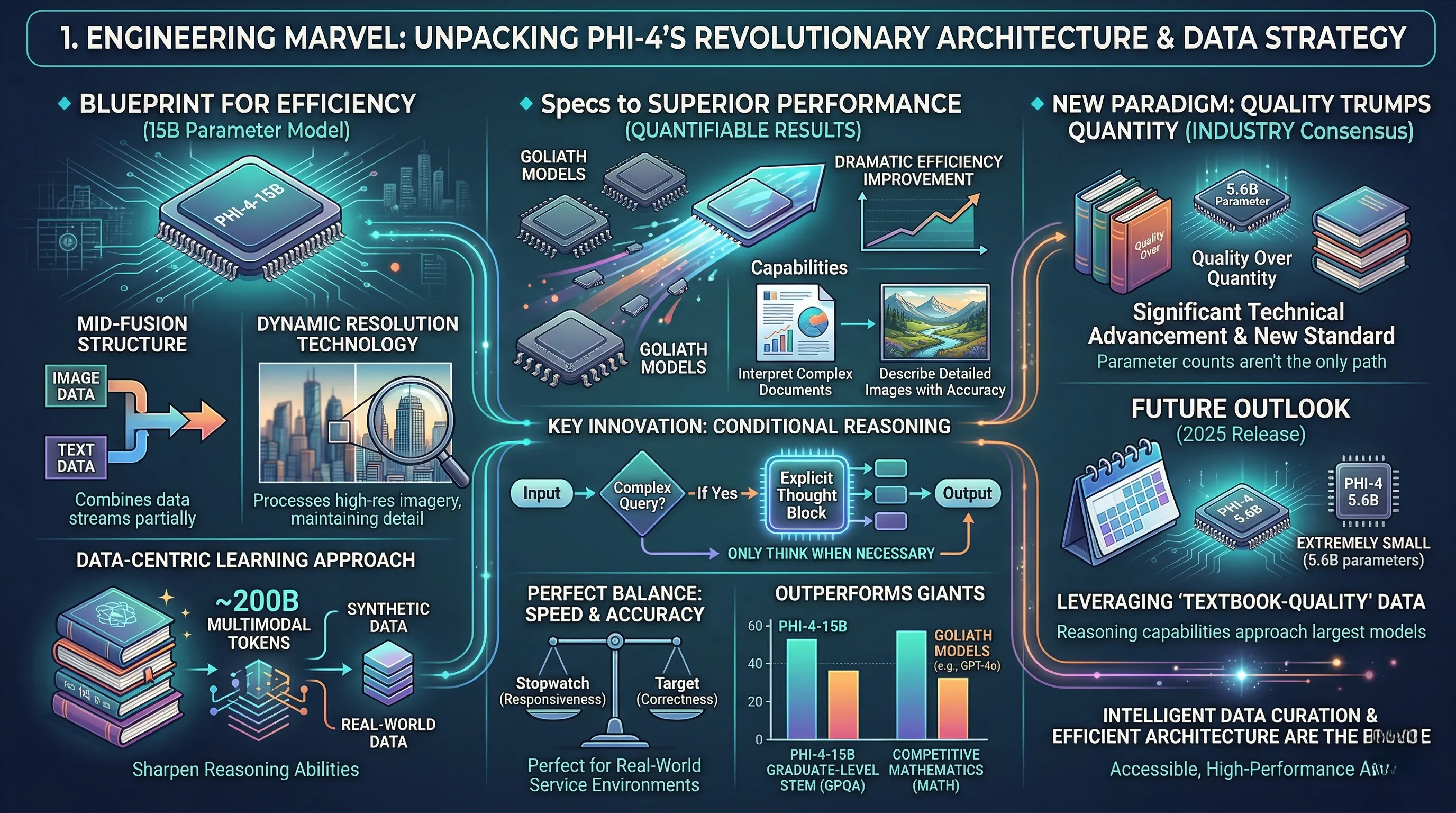

The Phi-4-reasoning-vision-15B model operates on a foundation of strategic design rather than sheer scale, featuring a lean 15 billion parameters.

Its core architecture integrates a mid-fusion structure, a sophisticated method for combining image and text data streams partway through the processing pipeline.
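To make the idea concrete, here is a minimal, dependency-free Python sketch of mid-fusion under simplifying assumptions. The vision encoder, the projector weights, and `toy_shared_layer` (a mean-mixing stand-in for self-attention) are all hypothetical illustrations, not Phi-4's actual components. The point is only the order of operations: the image is encoded on its own path, projected into the text embedding space, and then joined with the text tokens so that later layers process both together.

```python
from typing import List

Vector = List[float]


def encode_image(patches: List[Vector]) -> List[Vector]:
    """Hypothetical stand-in for a pretrained vision encoder: one feature vector per image patch."""
    return [[2.0 * x for x in patch] for patch in patches]


def project(features: List[Vector], weight: List[Vector]) -> List[Vector]:
    """Linear projector mapping each vision feature (length d_v) into the
    text embedding space (length d_t). `weight` is a d_t x d_v matrix as a list of rows."""
    return [[sum(w * f for w, f in zip(row, feat)) for row in weight] for feat in features]


def toy_shared_layer(seq: List[Vector]) -> List[Vector]:
    """Crude stand-in for self-attention: every position receives the sequence mean,
    so image-derived tokens and text tokens influence each other from here on."""
    dim = len(seq[0])
    mean = [sum(tok[i] for tok in seq) / len(seq) for i in range(dim)]
    return [[t + m for t, m in zip(tok, mean)] for tok in seq]


def mid_fusion_forward(text_embeddings: List[Vector],
                       image_patches: List[Vector],
                       weight: List[Vector]) -> List[Vector]:
    # 1. Before fusion: the image is processed on its own path.
    visual_tokens = project(encode_image(image_patches), weight)
    # 2. Fusion point: projected visual tokens join the text sequence partway through.
    fused = visual_tokens + text_embeddings
    # 3. After fusion: both modalities pass through the same shared layers.
    return toy_shared_layer(fused)


text = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]]  # two text tokens, d_t = 3
patches = [[1.0, 0.0], [0.0, 1.0]]          # two image patches, d_v = 2
W = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]    # hypothetical 3 x 2 projector
out = mid_fusion_forward(text, patches, W)  # 4 fused tokens, each of length 3
```

In an early-fusion design, raw image patches would enter the shared layers from the very first step; in a late-fusion design, the two streams would only meet near the output. Mid-fusion sits in between, and a common motivation for this arrangement is that a pretrained vision encoder can be reused while the language layers still get direct access to visual tokens.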

This is augmented by dynamic resolution technology, allowing the model to process high-resolution imagery without compromising detail.
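One common way to implement dynamic resolution in vision-language models is tiling: the image is cut into fixed-size crops at close to its native resolution, plus one downscaled overview, so larger or more detailed images receive more visual tokens. The article does not say whether Phi-4 uses exactly this scheme, so treat the sketch below as an assumption; `TILE`, `TOKENS_PER_TILE`, and the `max_tiles` budget are hypothetical values chosen for illustration.

```python
import math
from typing import Tuple

# Hypothetical settings: neither value comes from Phi-4 documentation.
TILE = 448             # side length of one square tile, in pixels
TOKENS_PER_TILE = 256  # visual tokens produced per tile


def tile_grid(width: int, height: int, max_tiles: int = 16) -> Tuple[int, int]:
    """Pick a (cols, rows) grid that keeps the image near native resolution,
    shrinking it uniformly only when the grid would exceed the tile budget."""
    if width <= 0 or height <= 0 or max_tiles < 1:
        raise ValueError("width, height and max_tiles must be positive")
    scale = 1.0
    while True:
        cols = math.ceil(width * scale / TILE)
        rows = math.ceil(height * scale / TILE)
        if cols * rows <= max_tiles:
            return cols, rows
        scale *= 0.9  # downscale by 10% and try again


def visual_token_count(width: int, height: int, max_tiles: int = 16) -> int:
    """Tokens for the detail tiles plus one low-resolution overview tile of the whole image."""
    cols, rows = tile_grid(width, height, max_tiles)
    return (cols * rows + 1) * TOKENS_PER_TILE


print(visual_token_count(300, 200))                             # 512
print(tile_grid(1000, 500), visual_token_count(1000, 500))      # (3, 2) 1792
```

With these settings, a small 300 × 200 image costs 512 visual tokens (one tile plus the overview), while a 1000 × 500 document scan gets a 3 × 2 grid and 1,792 tokens. Detail is preserved where the image needs it, and the tile budget caps the cost for very large inputs.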

Underpinning these systems is a high-quality, data-centric learning approach, trained on a curated dataset of approximately 200 billion multimodal tokens that judiciously combines synthetic and real-world data to sharpen reasoning abilities.
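The article does not describe how the synthetic and real-world data are combined, so the following is only a sketch of one standard technique, weighted source sampling, where each training example is drawn from a source chosen in proportion to its mixing weight. The 40/60 split, the source names, and the example strings are illustrative assumptions, not Phi-4's reported mixture.

```python
import random
from typing import Dict, Iterator, List


def mixed_stream(sources: Dict[str, List[str]], weights: Dict[str, float],
                 seed: int = 0) -> Iterator[str]:
    """Endless stream of training examples: each draw first picks a source in
    proportion to its mixing weight, then picks an example from that source."""
    rng = random.Random(seed)  # seeded so the mixture is reproducible
    names = list(sources)
    probs = [weights[name] for name in names]
    while True:
        name = rng.choices(names, weights=probs, k=1)[0]
        yield rng.choice(sources[name])


# Illustrative corpus and weights only; not the actual Phi-4 data mixture.
corpus = {
    "synthetic": ["synthetic: worked geometry proof", "synthetic: chart Q&A pair"],
    "real": ["real: scanned invoice with caption", "real: textbook figure with caption"],
}
stream = mixed_stream(corpus, {"synthetic": 0.4, "real": 0.6})
batch = [next(stream) for _ in range(8)]
```

Keeping the mixing weights explicit makes the curation decision a tunable knob: shifting the ratio changes what the model sees without altering either underlying dataset.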

🔹 From Specs to Superior Performance

This architectural approach delivers a dramatic improvement in computational efficiency, enabling a 15B model to achieve performance comparable to, or even surpassing, much larger models.

The mid-fusion and dynamic resolution mean Phi-4 can interpret complex documents or describe detailed images with an accuracy that belies its smaller size.

Its most significant innovation is a structured, conditional reasoning system—a mechanism that allows the model to 'only think when necessary' by activating explicit 'thought' blocks for complex queries.
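The "only think when necessary" behavior can be pictured as a gate in front of an optional reasoning step. In the actual model this decision is learned during training; the keyword-and-length heuristic in `needs_thinking`, the cue list, and the `reason`/`answer` callables below are hypothetical stand-ins used purely to show the control flow.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical cue list; a real model learns this decision rather than matching keywords.
REASONING_CUES = ("solve", "prove", "derive", "calculate", "how many", "why")


@dataclass
class Response:
    thought: Optional[str]  # None when the model answered directly
    answer: str


def needs_thinking(query: str, threshold: int = 1) -> bool:
    """Toy gate: count reasoning cues, and treat very long prompts as harder."""
    q = query.lower()
    score = sum(cue in q for cue in REASONING_CUES)
    score += len(q.split()) // 50  # every 50 words adds one point
    return score >= threshold


def respond(query: str,
            reason: Callable[[str], str],
            answer: Callable[[str, Optional[str]], str]) -> Response:
    """Open an explicit 'thought' block only when the gate says the query needs it."""
    if needs_thinking(query):
        thought = reason(query)  # slow path: extra tokens spent on reasoning
        return Response(thought=thought, answer=answer(query, thought))
    return Response(thought=None, answer=answer(query, None))  # fast path


quick = respond("Describe this image.", lambda q: "...", lambda q, t: "A red bicycle.")
hard = respond("Solve for x: 2x + 3 = 7",
               lambda q: "Subtract 3 from both sides, then divide by 2.",
               lambda q, t: "x = 2")
# quick.thought is None; hard.thought holds the reasoning text
```

Easy requests such as an image description take the fast path with no thought block, while a query like "Solve for x: 2x + 3 = 7" triggers the slower reasoning step. That asymmetry is the source of the speed and accuracy trade-off.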

This creates an optimal balance between speed and accuracy, making it highly suitable for real-world service environments where responsiveness is as critical as correctness.

The results are quantifiable, with Phi-4-reasoning-vision-15B outperforming giants like GPT-4o on the demanding graduate-level STEM (GPQA) and competition mathematics (MATH) benchmarks.

🔹 The New Paradigm: Quality Trumps Quantity

The industry consensus is that the Phi-4 family represents a significant technical advancement, setting a new standard for AI performance by proving that resource-intensive parameter counts are not the only path to top-tier reasoning.

This philosophy is set to culminate in the 2025 release of Phi-4 5.6B, a model with just 5.6 billion parameters.

By leveraging "textbook-quality" data, this upcoming model promises reasoning capabilities that approach those of today's largest models, cementing the strategy that intelligent data curation and efficient architecture are the future of accessible, high-performance AI.

2. The New AI Arms Race: How Phi-4 is Reshaping the Competitive Landscape

🔹 Benchmark Supremacy: Punching Above Its Weight Class

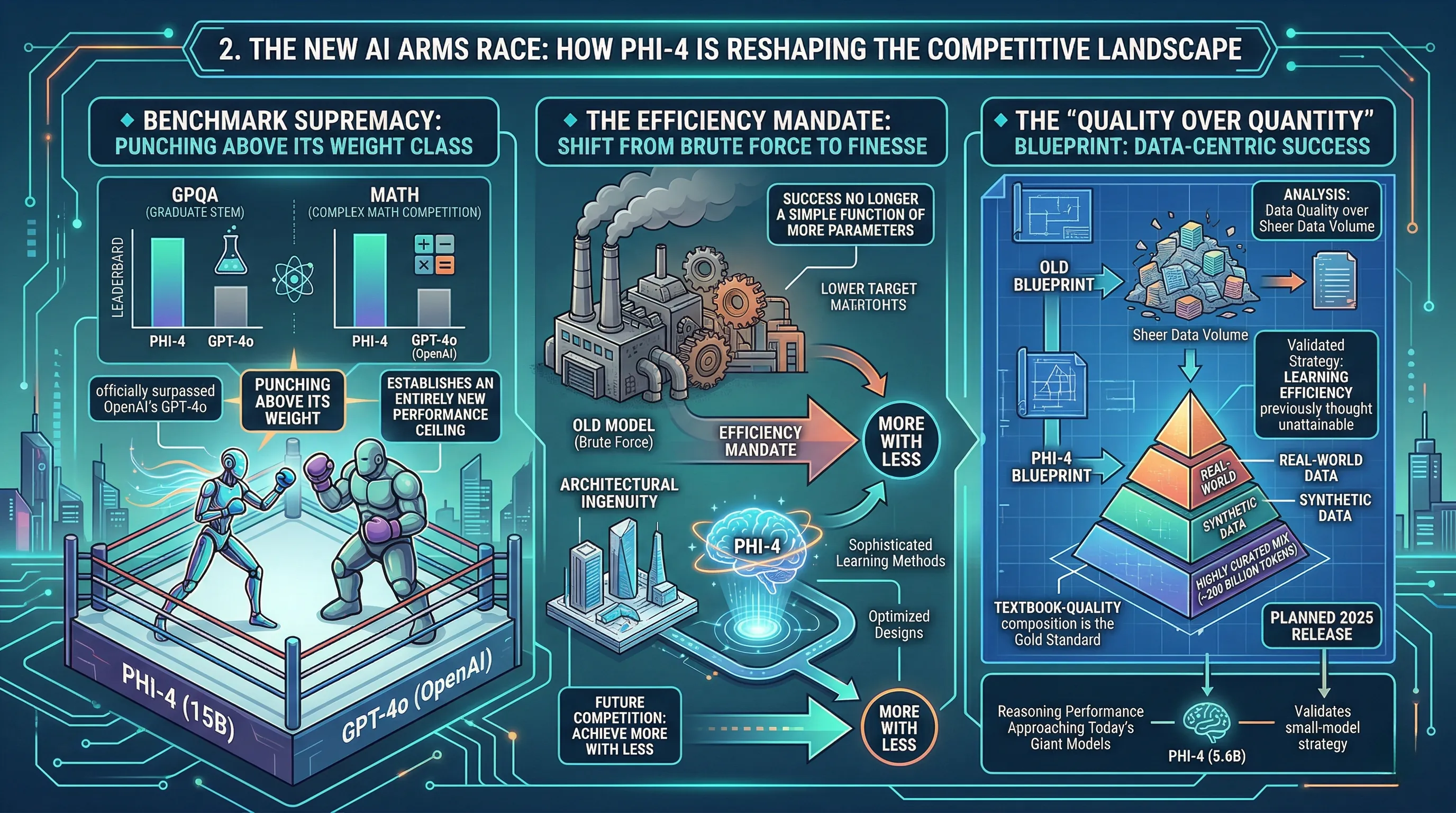

The competitive AI landscape has been fundamentally altered by the performance of Phi-4-reasoning-vision-15B.

Despite its relatively lean 15-billion-parameter architecture, the model has officially surpassed OpenAI's formidable GPT-4o in two of the industry's most challenging benchmarks.

Specifically, Phi-4 has demonstrated superior capability on GPQA, a benchmark consisting of graduate-level STEM questions, and MATH, which measures performance on complex math competition problems.

This achievement is not an incremental improvement; it establishes an entirely new performance ceiling for multimodal AI systems, directly challenging the long-held belief that only massive-scale models can lead in complex reasoning tasks.

🔹 The Efficiency Mandate: Shifting from Brute Force to Finesse

This superior benchmark performance forces a strategic reckoning among all major AI developers.

The success of Phi-4 proves that achieving state-of-the-art results is no longer a simple function of adding more parameters and computational power.

Competitors are now facing a new reality where efficiency and architectural ingenuity are the primary drivers of success.

This puts immense pressure on rivals to move beyond brute-force scaling and invest in more sophisticated, data-centric learning methods and optimized designs, like Phi-4's conditional reasoning, which balances speed and accuracy for real-world service environments.

The clear implication is that future competition will be defined by the ability to achieve more with less—less data, fewer parameters, and significantly less computational overhead.

🔹 The "Quality Over Quantity" Blueprint for the Industry

The analyst consensus is that Phi-4's success provides a new blueprint for the entire industry, centered on the principle of data quality over sheer data volume.

By leveraging a highly curated mix of synthetic and real data totaling approximately 200 billion tokens, the model has demonstrated a learning efficiency previously thought unattainable.

This strategy is further validated by the planned 2025 release of the even smaller 5.6-billion-parameter Phi-4 model, which promises reasoning performance approaching that of today's giant models.

Competitors are now compelled to re-evaluate their entire data curation and training pipelines, recognizing that the new gold standard is not the size of the dataset, but its "textbook-quality" composition.

3. Echoes of Genius: What Experts and the Community Are Saying About Phi-4's Impact

🔹 The Benchmark Disruption

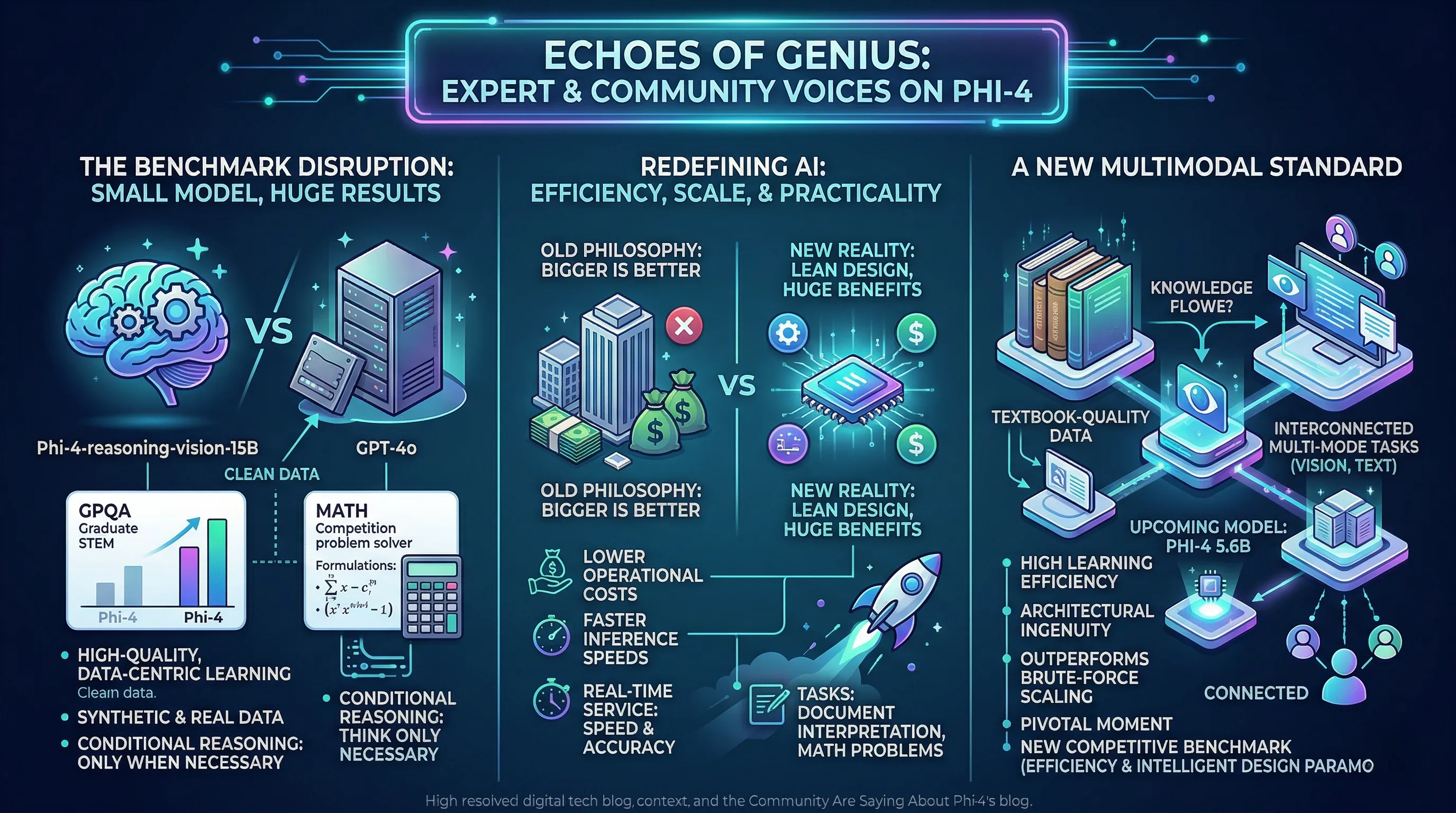

Initial analysis of Phi-4-reasoning-vision-15B has centered on its astonishing benchmark performance.

Despite its relatively modest 15-billion-parameter size and training on approximately 200 billion multimodal tokens, the model has officially surpassed the much larger GPT-4o in two critical academic domains: the GPQA benchmark for graduate-level STEM questions and the MATH competition benchmark.

This achievement was engineered through a high-quality, data-centric learning method that combines synthetic and real data to specifically enhance its scientific and mathematical reasoning capabilities.

Experts point to its architecture, which employs a "conditional reasoning" approach, as a key factor, allowing the model to 'think' only when necessary to solve a problem.

🔹 Redefining AI Scalability and Practicality

The real-world implication of this performance is a fundamental challenge to the industry's "bigger is better" philosophy.

By achieving superior results with significantly fewer computational resources and less data, Phi-4 demonstrates a new path toward AI efficiency that has profound economic and practical benefits.

This lean design translates directly into lower operational costs and faster inference speeds, making top-tier AI performance viable for deployment in real-time service environments where both speed and accuracy are non-negotiable.

The ability to process complex visual and text-based tasks, from interpreting documents to solving math problems, without the overhead of massive models, dramatically lowers the barrier to entry for advanced AI applications.

🔹 A New Standard for Multimodal Performance

The consensus among industry analysts is that the Phi-4 series represents a significant technical advancement, effectively setting a new standard for what is possible in AI development.

The series is lauded for its high learning efficiency, proving that architectural ingenuity and a focus on "textbook-quality" data can outperform brute-force scaling.

This narrative is further reinforced by the upcoming Phi-4 5.6B model, which promises to deliver reasoning performance comparable to today's giant models from an extremely small parameter count.

Collectively, the community views this as a pivotal moment, establishing a new competitive benchmark where efficiency and intelligent design are paramount to success in multimodal tasks.

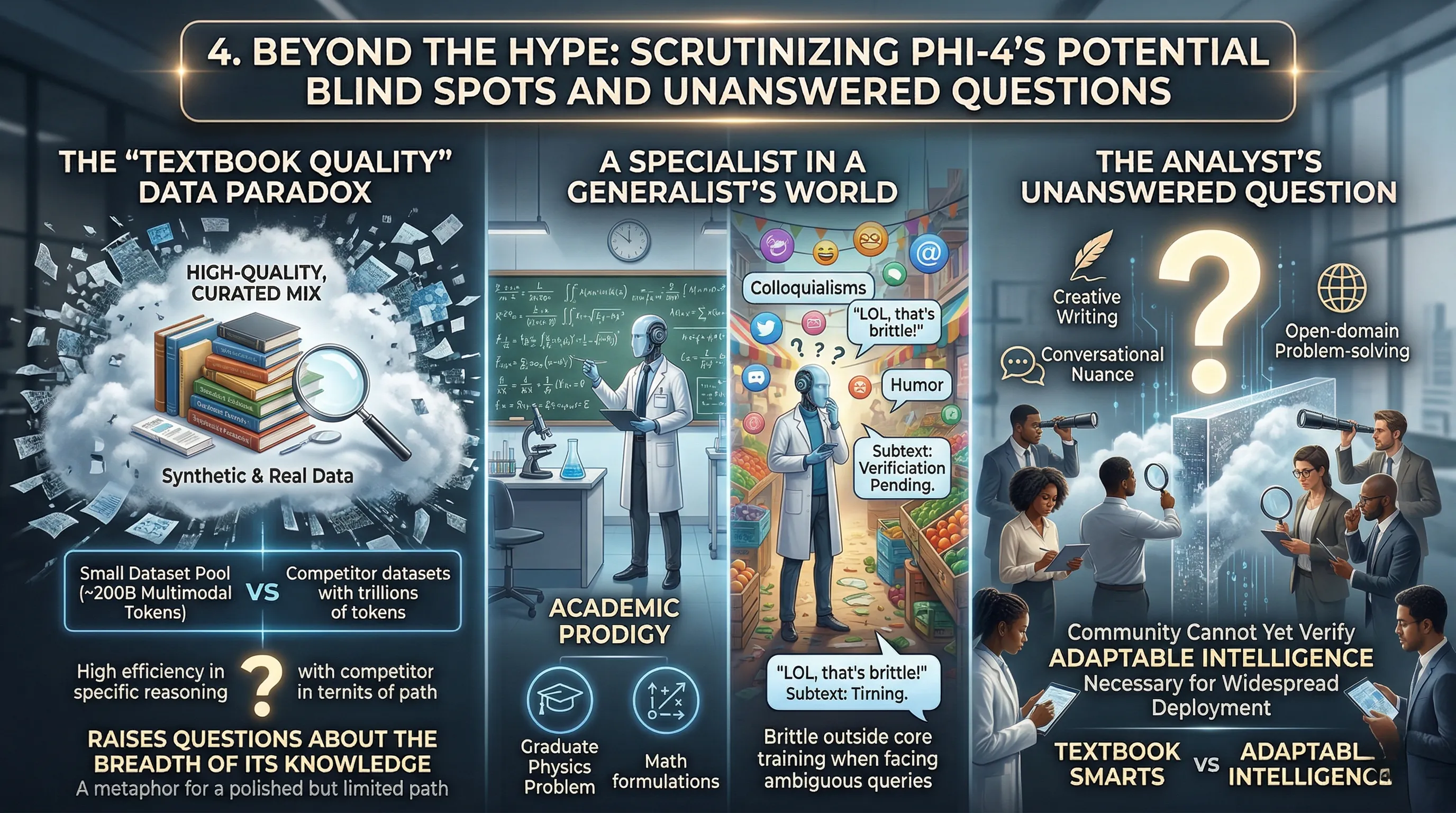

4. Beyond the Hype: Scrutinizing Phi-4's Potential Blind Spots and Unanswered Questions

🔹 The "Textbook Quality" Data Paradox

The official documentation highlights Phi-4's training on "textbook-quality" data and a specialized mix of synthetic and real data sources.

This totals approximately 200 billion multimodal tokens for the 15B vision model, a figure significantly smaller than datasets used for competing large-scale models.

While this data-centric learning method is credited for the model's high efficiency and strong reasoning in specific domains, it raises critical questions about the breadth of its knowledge.

🔹 A Specialist in a Generalist's World

A model heavily optimized on academic and synthetic data could possess world-class knowledge in science and mathematics while lacking the nuanced, messy "common sense" gleaned from a broader trawl of the internet.

This could translate into a user experience where Phi-4 flawlessly solves a graduate-level physics problem but struggles to understand colloquialisms, humor, or the subtext of a business email.

The risk is creating an AI that is an academic prodigy but lacks the versatile, real-world intuition required for a truly general-purpose assistant, potentially making it brittle when faced with ambiguous or culturally-specific queries outside its core training.

🔹 The Analyst's Unanswered Question

The central question circulating among industry analysts is one of generalization.

While its performance in structured benchmarks like GPQA and MATH is undeniably impressive, this narrow focus leaves a significant blind spot regarding its capabilities in creative writing, conversational nuance, and open-domain problem-solving.

The consensus is that without broader benchmark data, the community cannot yet verify if Phi-4's "textbook smarts" translate into the adaptable intelligence necessary for widespread, consumer-facing deployment.

5. The Horizon Ahead: Phi-4's Vision for AI's Next Evolution

🔹 The Blueprint for Miniaturization

The developmental trajectory of the Phi-4 series charts a deliberate course away from the industry's long-held philosophy of brute-force scaling.

Microsoft's strategy is crystallized in the upcoming Phi-4 5.6B, a model slated for a 2025 release with an exceptionally lean 5.6 billion parameters.

This follows the precedent set by the Phi-4-reasoning-vision-15B, which already demonstrated dramatic efficiency gains and superior performance on benchmarks like GPQA against much larger models.

The core technical assertion is that a model trained meticulously on "textbook-quality" data can achieve reasoning capabilities comparable to foundational models with hundreds of billions of parameters.

🔹 From Cloud Mainframes to Local Devices

The real-world implication of this trend is the profound democratization of powerful AI.

An extremely compact yet potent model like Phi-4 5.6B fundamentally alters where and how advanced AI can be deployed.

This isn't just about reducing server costs; it's about enabling sophisticated reasoning directly on consumer hardware like laptops and smartphones, or in resource-constrained edge computing environments such as factory floors and remote research stations.
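A back-of-the-envelope calculation shows why parameter count matters so much for on-device use. The sketch below estimates the memory needed for the weights alone (parameters × bits per parameter ÷ 8). It ignores the KV cache, activations, and runtime overhead, so real requirements are higher, and the 16/8/4-bit precisions shown are common quantization choices rather than anything the article specifies.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for the model weights alone, in gigabytes (1 GB = 1e9 bytes).

    Excludes the KV cache, activations, and runtime overhead, so real usage is higher.
    """
    return num_params * bits_per_param / 8 / 1e9


for name, params in [("Phi-4 5.6B", 5.6e9), ("Phi-4-reasoning-vision-15B", 15e9)]:
    for bits in (16, 8, 4):
        print(f"{name}: {bits}-bit weights ~ {weight_memory_gb(params, bits):.1f} GB")
```

By this estimate, the 5.6B model's weights need about 11.2 GB at 16-bit precision and about 2.8 GB at 4-bit, compared with roughly 30 GB and 7.5 GB for the 15B model, which helps explain why the smaller model is the more plausible candidate for laptops and phones.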

This shift promises a new class of applications that can operate with lower latency, enhanced privacy by keeping data local, and without the prerequisite of a constant, high-bandwidth connection to the cloud.

🔹 A Paradigm Shift Towards Efficiency

The industry consensus forming around the Phi-4 series is that it represents a significant pivot towards architectural intelligence and data curation over raw computational might.

The success of these smaller models validates the hypothesis that the quality of training data and efficient design—such as Phi-4's mid-fusion structure and conditional reasoning—are becoming more critical differentiators than parameter count alone.

This "smarter, not bigger" approach signals a more sustainable and accessible future for AI development, where innovation is measured not by the scale of the model, but by the efficiency with which it achieves its results.

6. 💡 Tech Talk: Making Sense of the Jargon

- Multimodal: An AI's ability to process and understand different types of data inputs at the same time, such as images, text, and even audio. Think of it like a person who can understand a conversation by both listening to what's said and looking at facial expressions and body language simultaneously.

- Mid-fusion structure: A specific architectural design in AI models where information from different modalities (like images and text) is combined and processed together at an intermediate stage of the model. Imagine baking a cake where you mix the wet and dry ingredients together at just the right moment, not all at once at the start or only at the very end, to get the best texture.

- Dynamic resolution technology: An advanced technique that allows the AI to intelligently adjust the level of detail or "zoom" on images it processes, focusing on important areas at high resolution while maintaining efficiency for less critical parts. It's like a smart photographer who can zoom in on the important details of a picture when needed, but also quickly scan the whole scene without processing every tiny pixel, saving time and resources.

- Conditional reasoning: A smart reasoning approach where the AI only engages in complex, deep thinking computations when a task truly demands it, rather than overthinking every input. Picture a student who only studies extra hard for truly difficult exam questions, but quickly answers the easy ones without unnecessary effort, balancing speed with accuracy.
